There are two major problems with distributed data systems. The second is out-of-order messages, the first is duplicate messages, the third is off-by-one errors, and the first is duplicate messages.

This joke inspired Rockset to confront the data duplication problem through a process we call deduplication.

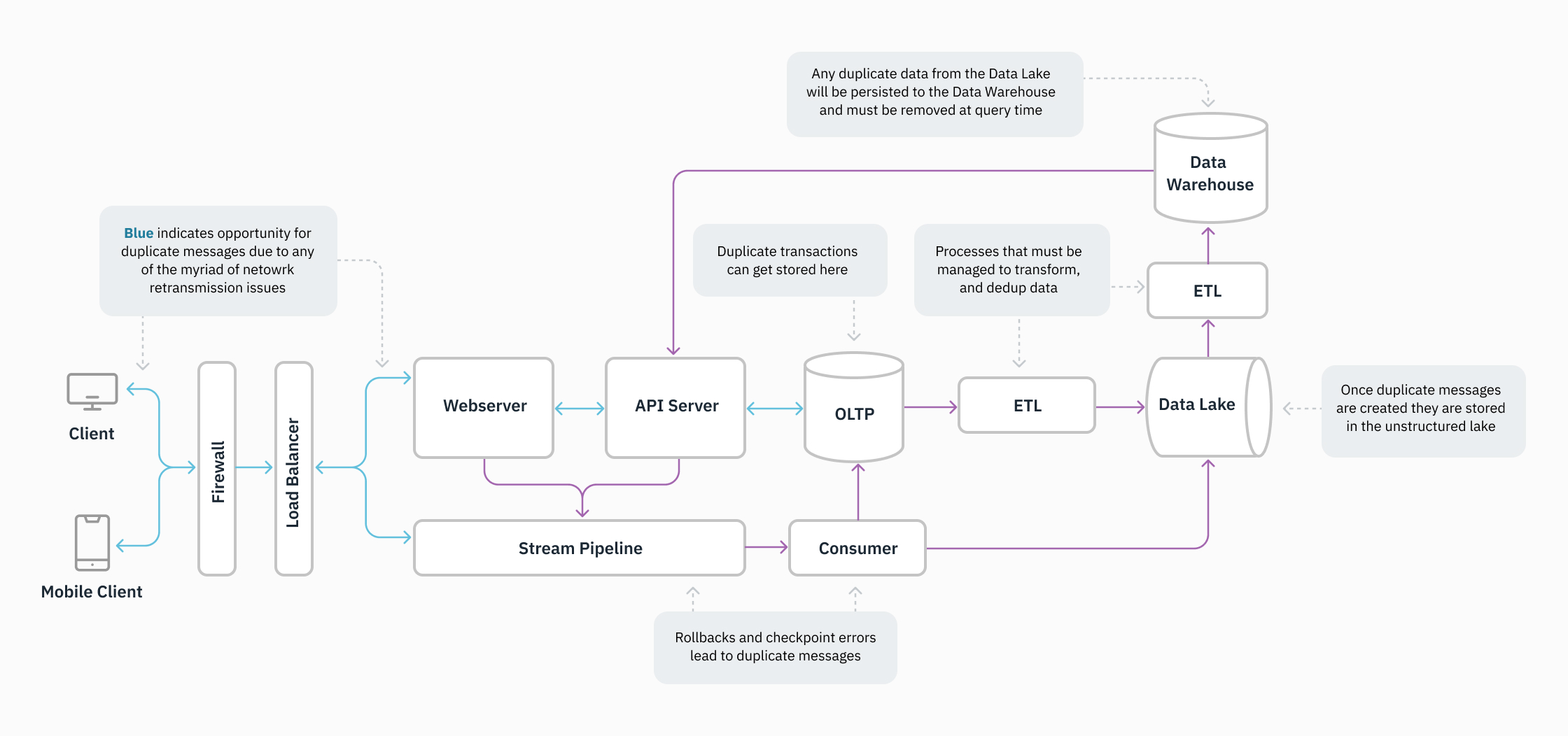

As data systems become more complex and the number of systems in a stack increases, data deduplication becomes more challenging. That is because duplication can occur in a multitude of ways. This blog post discusses data duplication, how it plagues teams adopting real-time analytics, and the deduplication solutions Rockset provides to resolve it. Whenever another distributed data system is added to the stack, organizations become weary of the operational tax on their engineering team.

Rockset addresses data duplication in a simple way and helps free teams from the complexities of deduplication, which include untangling where duplication is occurring, setting up and managing extract, transform, load (ETL) jobs, and attempting to solve duplication at query time.

The Duplication Problem

In distributed systems, messages are passed back and forth between many workers, and it’s common for messages to be generated two or more times. A system may create a duplicate message because:

- A confirmation was not sent.

- The message was replicated before it was sent.

- The message confirmation comes after a timeout.

- Messages are delivered out of order and must be resent.

By the time it arrives at a database management system, the same message may have been received multiple times with the same information. Your system must therefore ensure that duplicate records are not created. Duplicate records can be costly and take up memory unnecessarily, so duplicated messages must be consolidated into a single message.
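To make the timeout case concrete, here is a minimal Python sketch of a sender that retries whenever a confirmation is lost. The `Receiver` class, `send_with_retry` function, and `acks_lost` parameter are hypothetical names invented for this illustration and do not correspond to any particular messaging system.

```python
from dataclasses import dataclass, field


@dataclass
class Receiver:
    """Hypothetical downstream store that appends every message it receives."""
    records: list = field(default_factory=list)

    def receive(self, message: dict) -> None:
        self.records.append(message)


def send_with_retry(receiver: Receiver, message: dict, acks_lost: int) -> int:
    """Send a message, resending each time its confirmation is lost.

    `acks_lost` is how many confirmations are dropped before one gets through.
    Returns the number of delivery attempts made.
    """
    attempts = 0
    while True:
        receiver.receive(message)  # the message itself always arrives
        attempts += 1
        if attempts > acks_lost:   # this confirmation reaches the sender
            return attempts
        # Otherwise the sender times out waiting for the ack and resends.


receiver = Receiver()
attempts = send_with_retry(
    receiver, {"order_id": 42, "status": "paid"}, acks_lost=2
)
```

With two lost confirmations, the sender makes three attempts and the receiver ends up holding three identical records, even though only one logical message was ever produced.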

Deduplication Solutions

Before Rockset, there were three general deduplication methods:

- Stop duplication before it happens.

- Stop duplication during ETL jobs.

- Stop duplication at query time.

Deduplication History

Kafka was one of the first systems to create a solution for duplication. Kafka guarantees that a message is delivered once and only once. However, if the problem occurs upstream from Kafka, Kafka will see these messages as non-duplicates and send the duplicate messages with different timestamps. Therefore, exactly-once semantics do not always resolve duplication issues, and the remaining duplicates can negatively impact downstream workloads.

Stop Duplication Before it Happens

Some platforms attempt to stop duplication before it happens. This seems ideal, but the method requires difficult and costly work to identify the location and causes of the duplication.

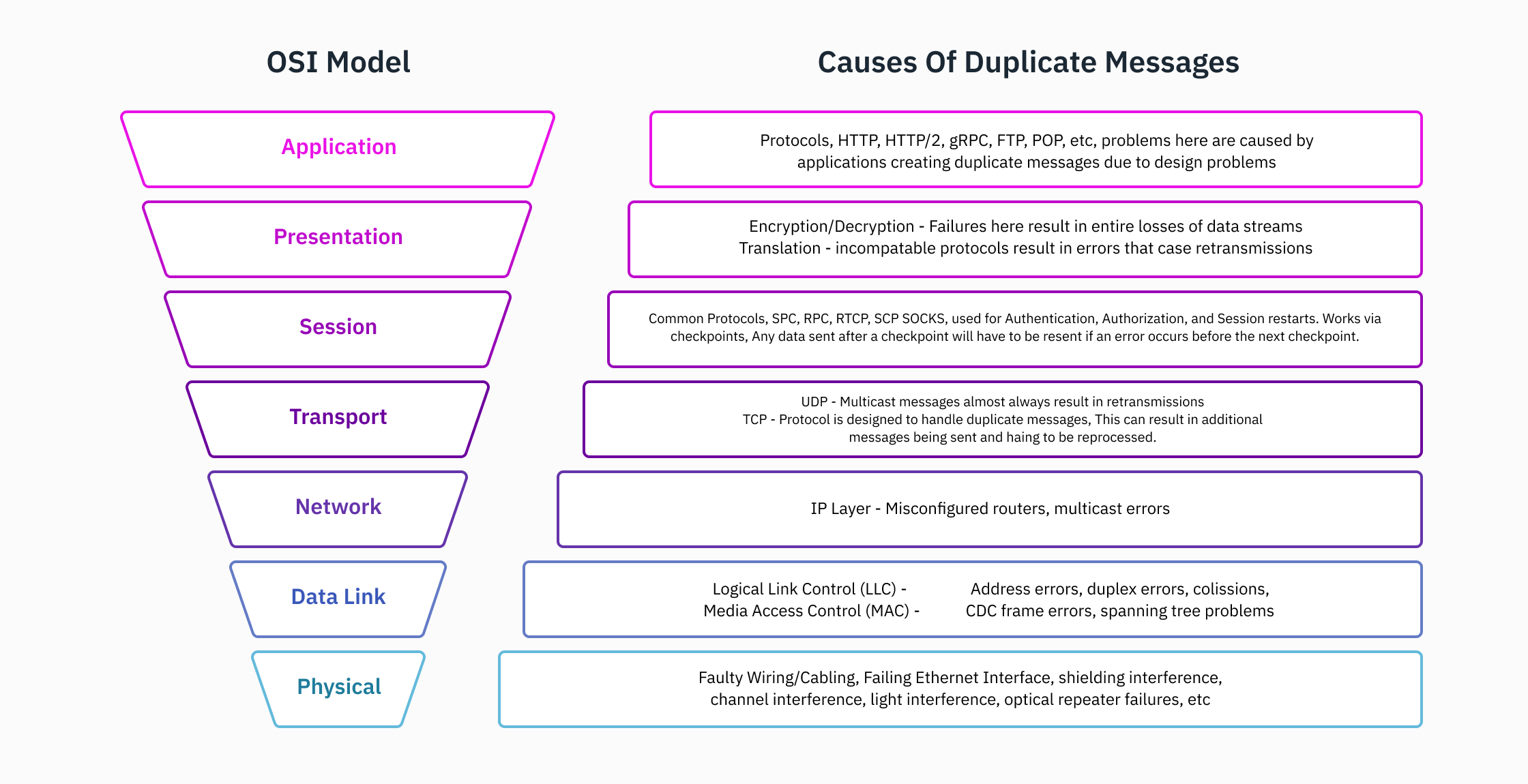

Duplication is commonly caused by any of the following:

- A switch or router.

- A failing consumer or worker.

- A problem with gRPC connections.

- Too much traffic.

- A window size that is too small for packets.

Note: Keep in mind this is not an exhaustive list.

This deduplication approach requires in-depth knowledge of the system network, as well as the hardware and framework(s). It is very rare, even for a full-stack developer, to understand the intricacies of all the layers of the OSI model and their implementation at a company. The data storage, access to data pipelines, data transformation, and application internals in an organization of any substantial size are all beyond the scope of a single individual, which is why organizations have specialized job titles. The ability to troubleshoot and identify every location where messages are duplicated requires in-depth knowledge that is simply unreasonable to expect of an individual, or even of a cross-functional team. Although the cost and expertise requirements are very high, this approach offers the greatest reward.

Stop Duplication During ETL Jobs

Stream-processing ETL jobs are another deduplication method. This involves deduplication during data stream consumption. The consumption outlets might include creating a compacted topic and/or introducing an ETL job with a common batch processing tool (e.g., Fivetran, Airflow, and Matillion). However, ETL jobs come with additional overhead to manage, require additional computing costs, are potential failure points with added complexity, and introduce latency to a system that may need high throughput.

For deduplication to be effective using the stream-processing ETL jobs method, you must ensure the ETL jobs run throughout your system. Since data duplication can occur anywhere in a distributed system, it is paramount that the architecture deduplicates everywhere messages are passed.

Stream processors can have an active processing window (open for a specific time) in which duplicate messages can be detected and compacted, and out-of-order messages can be reordered. Messages can still be duplicated if they are received outside the processing window. Furthermore, these stream processors must be maintained and can consume considerable compute resources and operational overhead.

Note: Messages received outside of the active processing window can be duplicated. We do not recommend solving deduplication issues using this method alone.
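The window limitation can be illustrated with a small Python sketch. It assumes a simplified processor that remembers each key only for `window_seconds` after it was last accepted. The function name, the tuple layout, and the eviction rule are assumptions made for this example and are not the behavior of any specific stream processor.

```python
def dedupe_with_window(messages, window_seconds):
    """Drop a message if the same key was seen within the active window.

    `messages` is an iterable of (arrival_time_seconds, key) tuples sorted by
    arrival time. Keys older than the window are forgotten, as a processor
    with bounded state would have to do.
    """
    last_seen = {}  # key -> arrival time of the most recent accepted copy
    accepted = []
    for arrival, key in messages:
        # Evict state that has fallen out of the active window.
        expired = [k for k, t in last_seen.items() if arrival - t > window_seconds]
        for old_key in expired:
            del last_seen[old_key]
        if key in last_seen:
            continue  # duplicate caught inside the window
        last_seen[key] = arrival
        accepted.append((arrival, key))
    return accepted


stream = [(0, "a"), (2, "a"), (3, "b"), (30, "a")]
result = dedupe_with_window(stream, window_seconds=10)
```

In this run the copy of `"a"` that arrives at second 2 is dropped, but the copy that arrives at second 30 is accepted again because the processor has already forgotten the key.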

Stop Duplication at Query Time

Another deduplication method is to attempt to solve it at query time. However, this increases the complexity of your query, which is risky because query errors could be generated.

For example, if your solution tracks messages using timestamps, and the duplicate messages are delayed by one second (instead of 50 milliseconds), the timestamps on the duplicate messages will not match what your query expects, causing an error to be thrown.
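The fragility of timestamp-based matching can be sketched in a few lines of Python. This is an illustrative stand-in for query-time logic, not actual query syntax: the function name and the `tolerance_ms` parameter are assumptions, and in this simplified version the failure shows up as a duplicate that survives rather than as a thrown error.

```python
def dedupe_at_query_time(rows, tolerance_ms):
    """Treat rows with the same payload as duplicates only if their
    timestamps fall within `tolerance_ms` of the last row kept."""
    kept = []
    last_kept_ts = {}  # payload -> timestamp of the last row kept for it
    for ts_ms, payload in sorted(rows):
        previous = last_kept_ts.get(payload)
        if previous is not None and ts_ms - previous <= tolerance_ms:
            continue  # close enough in time: assume it is a duplicate
        last_kept_ts[payload] = ts_ms
        kept.append((ts_ms, payload))
    return kept


# The same message duplicated after 50 ms, and after a full second.
fast_duplicate = [(1_000, "order-42"), (1_050, "order-42")]
slow_duplicate = [(1_000, "order-42"), (2_000, "order-42")]

fast_result = dedupe_at_query_time(fast_duplicate, tolerance_ms=100)
slow_result = dedupe_at_query_time(slow_duplicate, tolerance_ms=100)
```

The 50-millisecond duplicate is filtered out, while the one-second duplicate passes through untouched. Widening the tolerance would catch it, but at the risk of discarding legitimate repeat messages.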

How Rockset Solves Duplication

Rockset solves the duplication problem through unique SQL-based transformations at ingest time.

Rockset is a Mutable Database

Rockset is a mutable database and allows duplicate messages to be merged at ingest time. This approach frees teams from the many cumbersome deduplication options covered earlier.

Each document has a unique identifier called _id that acts like a primary key. Users can specify this identifier at ingest time (e.g. during updates) using SQL-based transformations. When a new document arrives with the same _id, the duplicate message merges into the existing record. This offers users a simple solution to the duplication problem.

When you bring data into Rockset, you can build your own complex _id key using SQL transformations that:

- Identify a single key.

- Identify a composite key.

- Extract data from multiple keys.

Rockset is fully mutable without an active window. As long as you specify messages with _id or identify _id within the document you are updating or inserting, incoming duplicate messages will be deduplicated and merged together into a single document.
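The idea of merging on a key at ingest time can be sketched with a small in-memory model in Python. This is not Rockset's API or SQL transformation syntax: `MutableCollection`, `make_id`, and the field names are hypothetical, and the sketch only assumes that documents sharing an `_id` are merged into one record whenever they arrive.

```python
def make_id(doc, key_fields):
    """Build an _id from one or more fields (a single or composite key)."""
    return "|".join(str(doc[name]) for name in key_fields)


class MutableCollection:
    """Hypothetical in-memory stand-in for a mutable document store."""

    def __init__(self, key_fields):
        self.key_fields = key_fields
        self.docs = {}  # _id -> document

    def ingest(self, doc):
        doc_id = make_id(doc, self.key_fields)
        # Insert the document, or merge it into the existing record.
        merged = {**self.docs.get(doc_id, {}), **doc, "_id": doc_id}
        self.docs[doc_id] = merged
        return doc_id


orders = MutableCollection(key_fields=["customer_id", "order_id"])
orders.ingest({"customer_id": 7, "order_id": 42, "status": "paid"})
orders.ingest({"customer_id": 7, "order_id": 42, "status": "paid"})     # duplicate
orders.ingest({"customer_id": 7, "order_id": 42, "status": "shipped"})  # late update
```

Because the store keeps no time window, the duplicate and the late update both land on the same record no matter how long after the first message they arrive, and the collection ends up with a single document holding the latest status.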

Rockset Enables Data Mobility

Other analytics databases store data in fixed data structures, which require compaction, resharding and rebalancing. Any time there is a change to existing data, a major overhaul of the storage structure is required. Many data systems use active windows to avoid such overhauls. As a result, if you map _id to a record outside the active window, the update to that record will fail. In contrast, Rockset users have a lot of data mobility and can update any record in Rockset at any time.

A Customer Win With Rockset

While we’ve spoken about the operational challenges of data deduplication in other systems, there’s also a compute-spend element. Attempting deduplication at query time or using ETL jobs can be computationally expensive for many use cases.

Rockset can handle data changes, and it supports inserts, updates and deletes that benefit end users. Here’s an anonymous story of one of the users that I’ve worked closely with on their real-time analytics use case.

Customer Background

A customer had a massive amount of data changes that created duplicate entries within their data warehouse. Every database change resulted in a new record, although the customer only wanted the current state of the data.

If the customer wanted to put this data into a data warehouse that cannot map _id, the customer would’ve had to cycle through the multiple events stored in their database. This includes running a base query followed by additional event queries to get to the latest value state. This process is extremely computationally expensive and time consuming.
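A rough Python sketch of that replay pattern shows where the cost comes from. The event layout (`record_id`, `version`, `changes`) and the function name are assumptions made for illustration and are not taken from the customer's actual schema.

```python
def latest_state_from_events(events, record_id):
    """Scan the stored change events, oldest first, and fold the ones for
    `record_id` into its current state.

    Returns (state, number_of_events_scanned).
    """
    state = {}
    scanned = 0
    for event in sorted(events, key=lambda e: e["version"]):
        scanned += 1
        if event["record_id"] == record_id:
            state.update(event["changes"])
    return state, scanned


events = [
    {"record_id": "acct-1", "version": 1, "changes": {"plan": "free", "seats": 1}},
    {"record_id": "acct-2", "version": 2, "changes": {"plan": "pro", "seats": 5}},
    {"record_id": "acct-1", "version": 3, "changes": {"plan": "pro"}},
    {"record_id": "acct-1", "version": 4, "changes": {"seats": 3}},
]

state, scanned = latest_state_from_events(events, "acct-1")
```

Every read has to sort and walk the whole event history to recover one record's current state, so the work grows with the number of changes rather than with the number of records.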

Rockset’s Solution

Rockset provided a more efficient deduplication solution to their problem. Rockset maps _id so that only the latest state of each record is stored, and all incoming events are deduplicated. Therefore the customer only needed to query the latest state. Thanks to this functionality, Rockset enabled this customer to reduce both the compute required and the query processing time, efficiently delivering sub-second queries.

Rockset is the real-time analytics database in the cloud for modern data teams. Get faster analytics on fresher data, at lower costs, by exploiting indexing over brute-force scanning.