Since 2016, OSS-Fuzz has been at the forefront of automated vulnerability discovery for open source projects. Vulnerability discovery is an essential part of keeping software supply chains secure, so our team is constantly working to improve OSS-Fuzz. For the last few months, we’ve tested whether we could boost OSS-Fuzz’s performance using Google’s Large Language Models (LLMs).

This blog post shares our experience of successfully applying the generative power of LLMs to improve the automated vulnerability detection technique known as fuzz testing (“fuzzing”). By using LLMs, we’re able to increase the code coverage for critical projects using our OSS-Fuzz service without manually writing additional code. Using LLMs is a promising new way to scale security improvements across the over 1,000 projects currently fuzzed by OSS-Fuzz and to remove barriers to future projects adopting fuzzing.

LLM-aided fuzzing

We created the OSS-Fuzz service to help open source developers find bugs in their code at scale, especially bugs that indicate security vulnerabilities. After more than six years of running OSS-Fuzz, we now support over 1,000 open source projects with continuous fuzzing, free of charge. As the Heartbleed vulnerability showed us, bugs that could be easily found with automated fuzzing can have devastating effects. For most open source developers, setting up their own fuzzing solution could cost time and resources. With OSS-Fuzz, developers are able to integrate their project for free, automated bug discovery at scale.

Since 2016, we’ve found and verified a fix for over 10,000 security vulnerabilities. We also believe that OSS-Fuzz could likely find even more bugs with increased code coverage. The fuzzing service covers only around 30% of an open source project’s code on average, meaning that a large portion of our users’ code remains untouched by fuzzing. Recent research suggests that the most effective way to increase this is by adding additional fuzz targets for every project, one of the few parts of the fuzzing workflow that isn’t yet automated.

When an open source project onboards to OSS-Fuzz, maintainers make an initial time investment to integrate their projects into the infrastructure and then add fuzz targets. The fuzz targets are functions that use randomized input to test the targeted code. Writing fuzz targets is a project-specific and manual process that is similar to writing unit tests. The ongoing security benefits from fuzzing make this initial investment of time worth it for maintainers, but writing a comprehensive set of fuzz targets is a tough expectation for project maintainers, who are often volunteers.
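To make the idea concrete, here is a minimal sketch of what a fuzz target can look like for a C++ project, assuming the libFuzzer-style entry point `LLVMFuzzerTestOneInput`. `BracketsBalanced` is a hypothetical stand-in for project code, invented for illustration; it is not taken from any OSS-Fuzz project.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>

// Hypothetical code under test: returns true when the angle brackets
// in `text` are balanced (every '>' closes an earlier '<').
bool BracketsBalanced(const std::string& text) {
  int depth = 0;
  for (char c : text) {
    if (c == '<') ++depth;
    if (c == '>') {
      if (depth == 0) return false;  // closing bracket with nothing open
      --depth;
    }
  }
  return depth == 0;
}

// libFuzzer-style entry point. The fuzzing engine calls this function
// repeatedly with mutated byte buffers and watches for crashes.
extern "C" int LLVMFuzzerTestOneInput(const uint8_t* data, size_t size) {
  // Turn the raw bytes into the input type the targeted code expects.
  std::string input(reinterpret_cast<const char*>(data), size);

  // Exercise the targeted code and check a simple property:
  // the same input must always produce the same answer.
  bool first = BracketsBalanced(input);
  assert(first == BracketsBalanced(input));

  return 0;  // libFuzzer expects 0 from a normal run
}
```

When built with a fuzzing engine (for example `clang++ -g -fsanitize=fuzzer,address target.cc`), the engine supplies `main` and feeds inputs to the entry point. The project-specific work is deciding which function to call and how to shape the bytes into valid arguments, which is the part that resembles writing a unit test.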

But what if LLMs could write additional fuzz targets for maintainers?

“Hey LLM, fuzz this project for me”

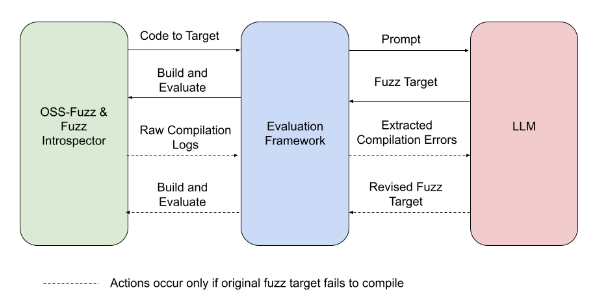

To discover whether an LLM could successfully write new fuzz targets, we built an evaluation framework that connects OSS-Fuzz to the LLM, conducts the experiment, and evaluates the results. The steps look like this:

- OSS-Fuzz’s Fuzz Introspector tool identifies an under-fuzzed, high-potential portion of the sample project’s code and passes the code to the evaluation framework.
- The evaluation framework creates a prompt that the LLM will use to write the new fuzz target. The prompt includes project-specific information.
- The evaluation framework takes the fuzz target generated by the LLM and runs the new target.
- The evaluation framework observes the run for any change in code coverage.
- In the event that the fuzz target fails to compile, the evaluation framework prompts the LLM to write a revised fuzz target that addresses the compilation errors.

Experiment overview: The experiment pictured above is a fully automated process, from identifying target code to evaluating the change in code coverage.

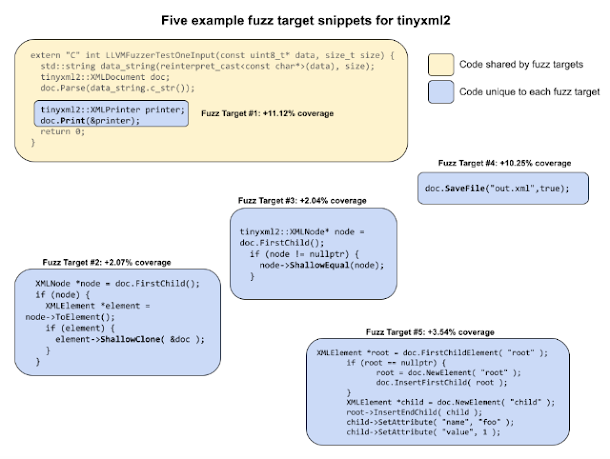

At first, the code generated from our prompts wouldn’t compile. However, after several rounds of prompt engineering and trying out the new fuzz targets, we saw projects gain between 1.5% and 31% code coverage. One of our sample projects, tinyxml2, went from 38% line coverage to 69% without any interventions from our team. The case of tinyxml2 taught us: when LLM-generated fuzz targets are added, tinyxml2 has the majority of its code covered.

Example fuzz targets for tinyxml2: Each of the five fuzz targets shown is associated with a different part of the code and adds to the overall coverage improvement.

To replicate tinyxml2’s results manually would have required at least a day’s worth of work, which would mean several years of work to manually cover all OSS-Fuzz projects. Given tinyxml2’s promising results, we want to implement them in production and to extend similar, automatic coverage to other OSS-Fuzz projects.

Additionally, in the OpenSSL project, our LLM was able to automatically generate a working target that rediscovered CVE-2022-3602, which was in an area of code that previously did not have fuzzing coverage. Though this is not a new vulnerability, it suggests that as code coverage increases, we will find more vulnerabilities that are currently missed by fuzzing.

Learn more about our results through our example prompts and outputs or through our experiment report.

The goal: fully automated fuzzing

In the next few months, we’ll open source our evaluation framework to allow researchers to test their own automatic fuzz target generation. We’ll continue to optimize our use of LLMs for fuzz target generation through more model finetuning, prompt engineering, and improvements to our infrastructure. We’re also collaborating closely with the Assured OSS team on this research in order to secure even more open source software used by Google Cloud customers.

Our longer-term goals include:

- Adding LLM fuzz target generation as a fully integrated feature in OSS-Fuzz, with continuous generation of new targets for OSS-Fuzz projects and zero manual involvement.
- Extending support from C/C++ projects to additional language ecosystems, like Python and Java.
- Automating the process of onboarding a project into OSS-Fuzz to eliminate any need to write even initial fuzz targets.

We’re working towards a future of personalized vulnerability detection with little manual effort from developers. With the addition of LLM-generated fuzz targets, OSS-Fuzz can help improve open source security for everyone.