If you're performing web requests with Spring Boot's WebClient you may, just like us, have read that the URL of your request should be defined using a URI builder (e.g. Spring 5 WebClient):

    webClient.get()
        .uri(uriBuilder -> uriBuilder.path("/v2/products/{id}")
            .build(productId))

If so, we recommend that you ignore what you read (unless hunting hard-to-find memory leaks is your hobby) and construct the URI as follows instead:

    webClient.get()
        .uri("/v2/products/{id}", productId)

In this blog post we'll explain how to avoid memory leaks with Spring Boot WebClient and why it's better to avoid the former pattern, using our own experience as motivation.

How did we discover this memory leak?

A while back we upgraded our application to use the latest version of the Axle framework. Axle is the bol.com framework for building Java applications, like (REST) services and frontend applications. It relies heavily on Spring Boot, and this upgrade also involved updating from Spring Boot version 2.3.12 to version 2.4.11.

When running our scheduled performance tests, everything looked fine. Most of our application's endpoints still provided response times of under 5 milliseconds. However, as the performance test progressed, we noticed our application's response times increasing up to 20 milliseconds, and after a long-running load test over the weekend, things got a lot worse. The response times skyrocketed to seconds. Not good.

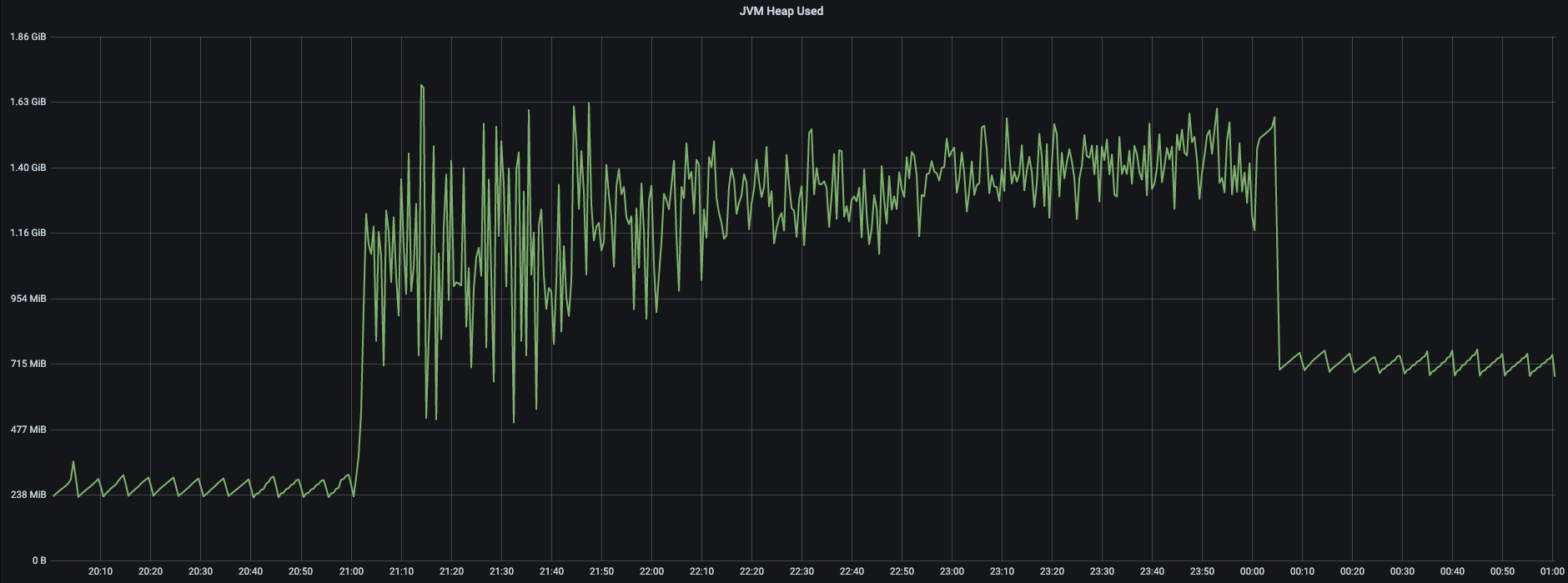

After an extended stare down contest with our Grafana dashboards, which give insights into our software’s CPU, thread and reminiscence utilization, this reminiscence utilization sample caught our eye:

This graph shows the JVM heap size before, during, and after a performance test that ran from 21:00 to 0:00. During the performance test, the application created threads and objects to handle all incoming requests. So, the capricious line showing the memory usage during this period is exactly what we'd expect. However, once the dust from the performance test settles, we'd expect the memory usage to settle down to the same level as before, but it's actually higher. Does anyone else smell a memory leak?

Time to call in MAT (the Eclipse Memory Analyzer Tool) to find out what causes this memory leak.

What caused this memory leak?

To troubleshoot this memory leak we:

- Restarted the application.

- Performed a heap dump (a snapshot of all the objects that are in memory in the JVM at a certain moment).

- Triggered a performance test.

- Performed another heap dump once the test had finished.
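The post does not say how the heap dumps were taken. As one possible approach, assuming a HotSpot-based JVM, a dump can be triggered from inside the application through the `HotSpotDiagnosticMXBean`. The `HeapDumper` class and the file names below are hypothetical and only illustrate the idea; the resulting `.hprof` file can then be opened in MAT.

```java
import com.sun.management.HotSpotDiagnosticMXBean;
import java.io.IOException;
import java.lang.management.ManagementFactory;
import java.nio.file.Files;
import java.nio.file.Path;

/** Hypothetical helper: writes a heap dump of the JVM it runs in (HotSpot only). */
final class HeapDumper {

    private HeapDumper() {
    }

    /**
     * Writes a heap dump to the given file and returns that file.
     * The target must not exist yet; recent JDKs also require a ".hprof" extension.
     * With liveObjectsOnly set, only reachable objects are included in the dump.
     */
    static Path dumpHeap(Path target, boolean liveObjectsOnly) throws IOException {
        HotSpotDiagnosticMXBean diagnostics =
                ManagementFactory.getPlatformMXBean(HotSpotDiagnosticMXBean.class);
        diagnostics.dumpHeap(target.toAbsolutePath().toString(), liveObjectsOnly);
        return target;
    }

    public static void main(String[] args) throws IOException {
        Path directory = Files.createTempDirectory("heap-dumps");
        // Assumed naming: one dump before the performance test, a second one afterwards.
        Path before = dumpHeap(directory.resolve("before-test.hprof"), true);
        System.out.println("Wrote " + before + " (" + Files.size(before) + " bytes)");
    }
}
```

Restricting the dump to live objects keeps garbage that is merely awaiting collection out of the comparison, which makes a genuine leak easier to spot.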

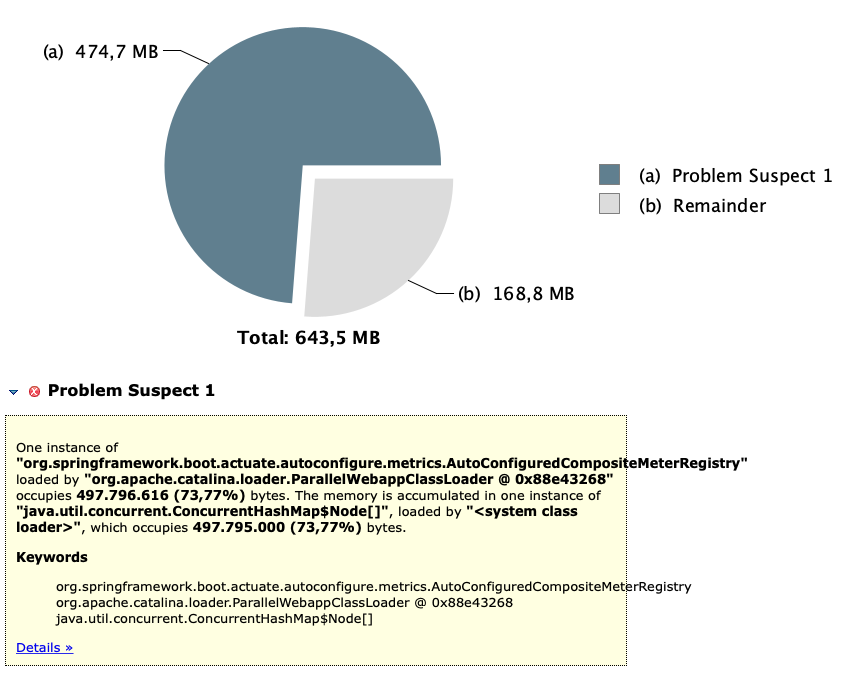

This allowed us to make use of MAT’s superior function to detect the leak suspects by evaluating two heap dumps taken a while aside. However we didn’t need to go that far, since, the heap dump from after the check was sufficient for MAT to search out one thing suspicious:

Here MAT tells us that one instance of Spring Boot's AutoConfiguredCompositeMeterRegistry occupies almost 500MB, which is 74% of the total used heap size. It also tells us that it has a (concurrent) hashmap that is responsible for this. We're almost there!

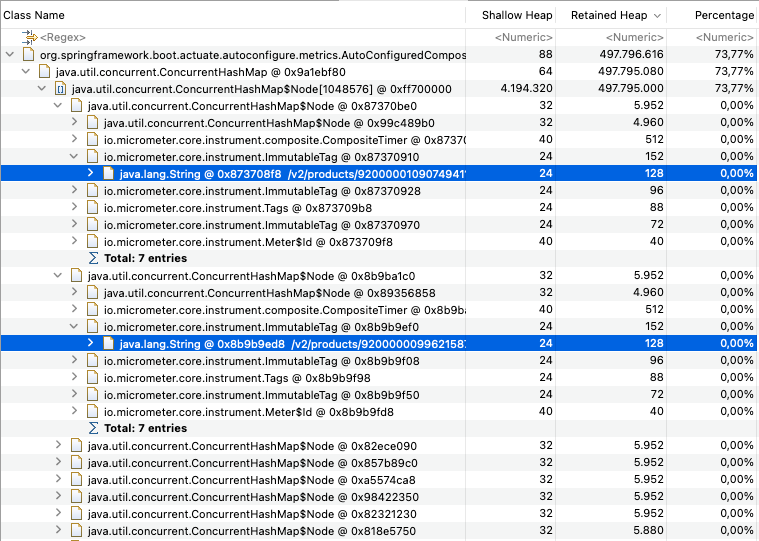

With MAT’s dominator tree function, we will record the most important objects and see what they saved alive – That sounds helpful, so let’s use it to have a peek at what’s inside this humongous hashmap:

Using the dominator tree we were able to easily browse through the hashmap's contents. In the picture above we opened two hashmap nodes. Here we see a lot of Micrometer timers tagged with “v2/products/…” and a product id. Hmm, where have we seen that before?

What does WebClient have to do with this?

So, it's Spring Boot's metrics that are responsible for this memory leak, but what does WebClient have to do with this? To find that out you really have to understand what causes Spring's metrics to store all these timers.

Inspecting the implementation of AutoConfiguredCompositeMeterRegistry, we see that it stores the metrics in a hashmap named meterMap. So, let's put a well-placed breakpoint on the spot where new entries are added and trigger the suspicious call that our WebClient performs to the “/v2/products/{id}” endpoint.

We ran the application again and … gotcha! For each call the WebClient made to the “/v2/products/{id}” endpoint, we saw Spring creating a new Timer for each unique product identifier. Each such timer is then stored in the AutoConfiguredCompositeMeterRegistry bean. That explains why we see so many timers with tags like these:

    /v2/products/9200000109074941
    /v2/products/9200000099621587
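To get a feel for why this grows without bound, here is a minimal sketch in plain Java. It is not Micrometer's implementation: `ToyTimerRegistry` is a hypothetical stand-in that, like the meterMap described above, keeps one entry per distinct tag value.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.LongAdder;

/** Toy stand-in for a meter registry: one entry per distinct "uri" tag value. */
final class ToyTimerRegistry {

    private final Map<String, LongAdder> timersByUriTag = new ConcurrentHashMap<>();

    /** Records one call; a new entry is created the first time a tag value is seen. */
    void record(String uriTag) {
        timersByUriTag.computeIfAbsent(uriTag, tag -> new LongAdder()).increment();
    }

    int timerCount() {
        return timersByUriTag.size();
    }

    public static void main(String[] args) {
        ToyTimerRegistry expanded = new ToyTimerRegistry();
        ToyTimerRegistry templated = new ToyTimerRegistry();

        for (long productId = 1; productId <= 10_000; productId++) {
            expanded.record("/v2/products/" + productId); // tag is the final URI
            templated.record("/v2/products/{id}");        // tag is the URI template
        }

        System.out.println("timers with expanded URI tags:  " + expanded.timerCount());  // 10000
        System.out.println("timers with templated URI tags: " + templated.timerCount()); // 1
    }
}
```

With expanded URIs the number of entries follows the number of distinct product ids ever requested, and nothing in this model removes them again.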

How can you fix this memory leak?

Before we identify when this memory leak might affect you, let's first explain how to fix it. We mentioned in the introduction that you can avoid this memory leak simply by not using a URI builder to construct WebClient URLs. Now we'll explain why that works.

After a little online research we came across this post (https://rieckpil.de/expose-metrics-of-spring-webclient-using-spring-boot-actuator/) by Philip Riecks, in which he explains:

“As we usually want the templated URI string like “/todos/{id}” for reporting and not multiple metrics e.g. “/todos/1337” or “/todos/42”. The WebClient offers several ways to construct the URI […], which you can all use, except one.”

And that method is using the URI builder, coincidentally the one we're using:

    webClient.get()
        .uri(uriBuilder -> uriBuilder.path("/v2/products/{id}")
            .build(productId))

Riecks continues in his post that “[w]ith this solution the WebClient doesn't know the URI template origin, as it gets passed the final URI.”

So the solution is as simple as using one of those other methods to pass in the URI, such that the WebClient gets passed the templated URI rather than the final one:

    webClient.get()
        .uri("/v2/products/{id}", productId)

Indeed, when we construct the URI like that, the memory leak disappears. Also, the response times are back to normal again.
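The difference between the two styles can be illustrated with a small toy model. This is a sketch of the behaviour Riecks describes, not Spring's code: `ToyRequest` and its methods are hypothetical, and the only assumption is that the metrics layer prefers the URI template as a tag whenever it is known.

```java
import java.net.URI;
import java.util.function.Supplier;
import java.util.regex.Matcher;

/** Toy model of a request whose metrics tag prefers the URI template when it is known. */
final class ToyRequest {

    private final URI uri;
    private final String template; // null when the caller supplied only a finished URI

    private ToyRequest(URI uri, String template) {
        this.uri = uri;
        this.template = template;
    }

    /** Template plus variable: the template itself is handed over, so it can be kept. */
    static ToyRequest fromTemplate(String template, Object variable) {
        String expanded = template.replaceFirst(
                "\\{[^}]+\\}", Matcher.quoteReplacement(String.valueOf(variable)));
        return new ToyRequest(URI.create(expanded), template);
    }

    /** Builder style: the callback returns only the finished URI, so no template is available. */
    static ToyRequest fromBuilder(Supplier<URI> uriBuilder) {
        return new ToyRequest(uriBuilder.get(), null);
    }

    /** Value this toy model would use as the "uri" tag of the request's timer. */
    String uriTag() {
        return template != null ? template : uri.getPath();
    }

    public static void main(String[] args) {
        long productId = 42;
        System.out.println(fromTemplate("/v2/products/{id}", productId).uriTag());
        // prints /v2/products/{id}
        System.out.println(fromBuilder(() -> URI.create("/v2/products/" + productId)).uriTag());
        // prints /v2/products/42
    }
}
```

Both requests go to the same URL; only the first one leaves behind a tag that stays the same for every product id.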

When might the memory leak affect you? A simple answer

Should you worry about this memory leak? Well, let's start with the most obvious case. If your application exposes its HTTP client metrics, and uses a method that takes a URI builder to set a templated URI on a WebClient, you should definitely be worried.

You can easily check whether your application exposes HTTP client metrics in two different ways:

- Inspecting the “/actuator/metrics/http.client.requests” endpoint of your Spring Boot application after your application has made at least one external call. A 404 means your application doesn't expose them.

- Checking whether the application property management.metrics.enable.http.client.metrics is set to true, in which case your application does expose them.
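The first check can also be scripted. The sketch below uses the JDK's own `java.net.http.HttpClient` (Java 11+) and treats a 200 response as "exposed". The class name and the default base URL `http://localhost:8080` are assumptions for illustration; adjust them to wherever your actuator endpoints are served.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

/** Hypothetical helper: asks a running application whether it exposes HTTP client metrics. */
final class HttpClientMetricsCheck {

    private HttpClientMetricsCheck() {
    }

    /** Returns true when the actuator answers 200 for the http.client.requests metric. */
    static boolean exposesHttpClientMetrics(URI baseUri) throws IOException, InterruptedException {
        URI metricsUri = baseUri.resolve("/actuator/metrics/http.client.requests");
        HttpRequest request = HttpRequest.newBuilder(metricsUri).GET().build();
        HttpResponse<Void> response = HttpClient.newHttpClient()
                .send(request, HttpResponse.BodyHandlers.discarding());
        return response.statusCode() == 200;
    }

    public static void main(String[] args) throws InterruptedException {
        // Assumed default; pass the real base URL as the first argument.
        URI baseUri = URI.create(args.length > 0 ? args[0] : "http://localhost:8080");
        try {
            System.out.println("HTTP client metrics exposed: " + exposesHttpClientMetrics(baseUri));
        } catch (IOException e) {
            System.out.println("Could not reach " + baseUri + ": " + e);
        }
    }
}
```

Remember that the application has to make at least one outgoing call first, otherwise the metric does not exist yet and the endpoint answers 404 either way.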

However, this doesn't mean that you're safe if you're not exposing the HTTP client metrics. We've been passing templated URIs to the WebClient using a builder for ages, and we've never exposed our HTTP client metrics. Yet, all of a sudden this memory leak reared its ugly head after an application upgrade.

So, might this memory leak affect you then? Just don't use URI builders with your WebClient and you should be protected against this potential memory leak. That would be the simple answer. You don't take simple answers? Fair enough, read on to find out what really caused this for us.

When might the memory leak affect you? A more complete answer

So, how did a simple application upgrade cause this memory leak to rear its ugly head? Evidently, the addition of a transitive dependency on Prometheus (https://prometheus.io/), an open source monitoring and alerting framework, caused the memory leak in our particular case. To understand why, let's go back to the situation before we added Prometheus.

Before we dragged in the Prometheus library, we pushed our metrics to statsd (https://github.com/statsd/statsd), a network daemon that listens to and aggregates application metrics sent over UDP or TCP. The StatsdMeterRegistry (a Micrometer class that Spring Boot auto-configures) is responsible for pushing metrics to statsd. The StatsdMeterRegistry only pushes metrics that aren't filtered out by a MeterFilter. The management.metrics.enable.http.client.metrics property is an example of such a MeterFilter. In other words, if management.metrics.enable.http.client.metrics = false, the StatsdMeterRegistry won't push any HTTP client metric to statsd and won't store these metrics in memory either. So far, so good.

By adding the transitive Prometheus dependency, we added yet another meter registry to our application, the PrometheusMeterRegistry. When there is more than one meter registry to expose metrics to, Spring instantiates a CompositeMeterRegistry bean. This bean keeps track of all individual meter registries, collects all metrics and forwards them to all the delegates it holds. It's the addition of this bean that caused the trouble.

The issue is that MeterFilter instances aren't applied to the CompositeMeterRegistry, but only to the MeterRegistry instances in the CompositeMeterRegistry. (See this commit for more information.) That explains why the AutoConfiguredCompositeMeterRegistry accumulates all the HTTP client metrics in memory, even when we explicitly set management.metrics.enable.http.client.metrics to false.
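The following toy model sketches that mechanism. It is deliberately simplified and is not Micrometer's code: `ToyFilteredRegistry` and `ToyCompositeRegistry` are hypothetical classes, and the filter is modelled as a plain predicate on the meter name. The point is only that a filter living on the delegates does nothing for a composite that keeps its own bookkeeping.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.Predicate;

/** Toy delegate: stores a meter id only when its filter accepts the meter name. */
final class ToyFilteredRegistry {

    private final Predicate<String> filter;
    private final Set<String> meterIds = ConcurrentHashMap.newKeySet();

    ToyFilteredRegistry(Predicate<String> filter) {
        this.filter = filter;
    }

    void register(String meterName, String uriTag) {
        if (filter.test(meterName)) {
            meterIds.add(meterName + "[uri=" + uriTag + "]");
        }
    }

    int meterCount() {
        return meterIds.size();
    }
}

/** Toy composite: has no filter of its own, so it remembers every meter it forwards. */
final class ToyCompositeRegistry {

    private final List<ToyFilteredRegistry> delegates = new ArrayList<>();
    private final Set<String> meterIds = ConcurrentHashMap.newKeySet();

    void add(ToyFilteredRegistry delegate) {
        delegates.add(delegate);
    }

    void register(String meterName, String uriTag) {
        meterIds.add(meterName + "[uri=" + uriTag + "]"); // kept regardless of delegate filters
        for (ToyFilteredRegistry delegate : delegates) {
            delegate.register(meterName, uriTag);
        }
    }

    int meterCount() {
        return meterIds.size();
    }

    public static void main(String[] args) {
        // Stand-in for "HTTP client metrics disabled": reject meters named http.client.*
        Predicate<String> httpClientMetricsDisabled = name -> !name.startsWith("http.client");
        ToyFilteredRegistry statsdLike = new ToyFilteredRegistry(httpClientMetricsDisabled);
        ToyFilteredRegistry prometheusLike = new ToyFilteredRegistry(httpClientMetricsDisabled);
        ToyCompositeRegistry composite = new ToyCompositeRegistry();
        composite.add(statsdLike);
        composite.add(prometheusLike);

        for (long productId = 1; productId <= 1_000; productId++) {
            composite.register("http.client.requests", "/v2/products/" + productId);
        }

        System.out.println("composite meters:       " + composite.meterCount());      // 1000
        System.out.println("statsd-like meters:     " + statsdLike.meterCount());     // 0
        System.out.println("prometheus-like meters: " + prometheusLike.meterCount()); // 0
    }
}
```

In this model the delegates stay empty, just as the property promises, while the composite in front of them grows with every new product id.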

Still confused? No worries, just don't use URI builders with your WebClient and you should be protected against this memory leak.

Conclusion

In this blog post we explained that this approach to defining the URL of your request with Spring Boot's WebClient is best avoided:

    webClient.get()
        .uri(uriBuilder -> uriBuilder.path("/v2/products/{id}")
            .build(productId))

We showed that this approach, which you might have come across in some online tutorial, is prone to memory leaks. We elaborated on why these memory leaks happen and explained that they can be avoided by defining parameterised request URLs like this:

    webClient.get()
        .uri("/v2/products/{id}", productId)