The inaccuracy and excessive optimism of cost estimates are often cited as dominant factors in DoD cost overruns. Causal learning can be used to identify the specific causal factors that are most responsible for escalating costs. To contain costs, it is essential to understand the factors that drive costs and which of them can be controlled. Although we may understand the relationships between certain factors, we do not yet separate the causal influences from non-causal statistical correlations.

Causal models should be superior to traditional statistical models for cost estimation: By identifying true causal factors as opposed to statistical correlations, cost models should be more applicable in new contexts where the correlations might not hold. More importantly, proactive control of project and task outcomes can be achieved by directly intervening on the causes of these outcomes. Until the development of computationally efficient causal-discovery algorithms, we did not have a way to obtain or validate causal models from primarily observational data, because randomized control trials in systems and software engineering research are so impractical that they are nearly impossible.

In this blog post, I describe the SEI Software Cost Prediction and Control (abbreviated as SCOPE) project, where we apply causal-modeling algorithms and tools to a large volume of project data to identify, measure, and test causality. The post builds on research undertaken with Bill Nichols and Anandi Hira at the SEI, and my former colleagues David Zubrow, Robert Stoddard, and Sarah Sheard. We sought to identify some causes of project outcomes, such as cost and schedule overruns, so that the cost of acquiring and operating software-reliant systems and their growing capability is predictable and controllable.

We are developing causal models, including structural equation models (SEMs), that provide a basis for

- calculating the effort, schedule, and quality outcomes of software projects under different scenarios (e.g., Waterfall versus Agile)

- estimating the results of interventions applied to a project in response to a change in requirements (e.g., a change in mission) or to help bring the project back on track toward achieving cost, schedule, and technical requirements.

An immediate benefit of our work is the identification of causal factors that provide a basis for controlling program costs. A longer-term benefit is the ability to use causal models to negotiate software contracts, design policy and incentives, and inform could-/should-cost and affordability efforts.

Why Causal Learning?

To systematically reduce costs, we generally must identify and consider the multiple causes of an outcome and carefully relate them to one another. A strong correlation between a factor X and cost may stem largely from a common cause of both X and cost. If we fail to observe and adjust for that common cause, we may incorrectly identify X as a significant cause of cost and expend energy (and costs) fruitlessly intervening on X, expecting cost to improve.
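The common-cause trap can be illustrated with a small simulation. This is a minimal sketch on synthetic data, not an analysis from the SCOPE project: the variable `z`, the coefficients, and the noise levels are all assumptions chosen for illustration. A hidden factor `z` drives both `x` and `cost`, while `x` has no effect on `cost` at all.

```python
import random


def slope(xs, ys):
    """Least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


def simulate(n, intervene, rng):
    """Generate (x, cost) pairs; cost depends only on the common cause z."""
    xs, costs = [], []
    for _ in range(n):
        z = rng.gauss(0, 1)  # hypothetical unobserved common cause
        if intervene:
            x = rng.gauss(0, 1)  # x is set externally, independent of z
        else:
            x = z + rng.gauss(0, 0.5)  # x is driven by z
        cost = 2.0 * z + rng.gauss(0, 0.5)  # x does not appear here
        xs.append(x)
        costs.append(cost)
    return xs, costs


rng = random.Random(0)
observational_slope = slope(*simulate(5000, False, rng))
interventional_slope = slope(*simulate(5000, True, rng))
print(f"observational slope:  {observational_slope:.2f}")
print(f"interventional slope: {interventional_slope:.2f}")
```

In the observational data, `x` appears to be a strong predictor of `cost`. Once `x` is set independently of `z`, the apparent effect disappears, which is the situation a manager faces after spending effort on the wrong factor.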

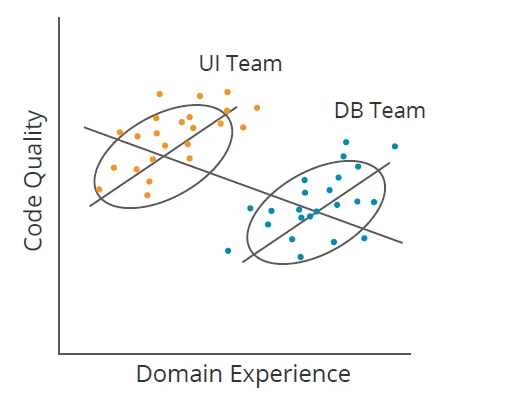

Another challenge to correlations is illustrated by Simpson’s Paradox. For example, in Figure 1, if a program manager did not segment data by team (User Interface [UI] and Database [DB]), they might conclude that increasing domain experience reduces code quality (downward line); however, within each team, the opposite is true (two upward lines). Causal learning identifies when factors like team membership explain away (or mediate) correlations. It works for much more complicated datasets too.

Figure 1: Illustration of Simpson’s Paradox
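The pattern in Figure 1 can be reproduced with a handful of made-up numbers. The sketch below uses invented experience and quality values for two teams (they are not taken from the figure or from any project data) and compares the regression slope within each team against the slope of the pooled data.

```python
def ols_slope(xs, ys):
    """Least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


# Hypothetical data: domain experience (years) and a code-quality score.
teams = {
    "UI": {"experience": [1, 2, 3, 4, 5], "quality": [7.0, 7.5, 8.0, 8.5, 9.0]},
    "DB": {"experience": [6, 7, 8, 9, 10], "quality": [2.0, 2.5, 3.0, 3.5, 4.0]},
}

# Slope within each team: quality rises with experience.
within_slopes = {
    team: ols_slope(data["experience"], data["quality"])
    for team, data in teams.items()
}

# Slope when team membership is ignored: quality appears to fall.
pooled_x = [x for data in teams.values() for x in data["experience"]]
pooled_y = [y for data in teams.values() for y in data["quality"]]
pooled_slope = ols_slope(pooled_x, pooled_y)

print("within-team slopes:", within_slopes)
print("pooled slope:", round(pooled_slope, 3))
```

Both within-team slopes are positive while the pooled slope is negative, so the sign of the conclusion depends entirely on whether team membership is taken into account.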

Causal learning is a form of machine learning that focuses on causal inference. Machine learning produces a model that can be used for prediction from a dataset. Causal learning differs from machine learning in its focus on modeling the data-generation process. It answers questions such as

- How did the data come to be the way it is?

- What data is driving which outcomes?

Of particular interest in causal learning is the distinction between conditional dependence and conditional independence. For example, if I know what the temperature is outside, I can find that the number of shark attacks and ice cream sales are independent of each other (conditional independence). If I know that a car won’t start, I can find that the condition of the gas tank and battery are dependent on each other (conditional dependence) because if I know one of these is fine, the other is not likely to be fine.
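The car example can be checked by enumerating a tiny probability model. The sketch below rests on simplifying assumptions that are not in the text: the gas tank and battery are each fine with probability 0.9, they fail independently, and the car starts exactly when both are fine.

```python
from itertools import product

P_GAS_OK = 0.9      # assumed probability that the gas tank is fine
P_BATTERY_OK = 0.9  # assumed probability that the battery is fine


def joint():
    """Yield (gas_ok, battery_ok, starts, probability) for every case."""
    for gas_ok, battery_ok in product([True, False], repeat=2):
        p_gas = P_GAS_OK if gas_ok else 1 - P_GAS_OK
        p_battery = P_BATTERY_OK if battery_ok else 1 - P_BATTERY_OK
        starts = gas_ok and battery_ok  # simplification: no other failure modes
        yield gas_ok, battery_ok, starts, p_gas * p_battery


def prob(event, given=lambda gas, battery, starts: True):
    """Conditional probability of `event` given `given`, by enumeration."""
    numerator = sum(p for g, b, s, p in joint() if given(g, b, s) and event(g, b, s))
    denominator = sum(p for g, b, s, p in joint() if given(g, b, s))
    return numerator / denominator


def battery_ok(gas, battery, starts):
    return battery


# Without knowing whether the car starts, gas tells us nothing about the battery.
p_battery = prob(battery_ok)
p_battery_given_gas = prob(battery_ok, lambda g, b, s: g)

# Once we know the car will not start, the two become dependent.
p_battery_no_start = prob(battery_ok, lambda g, b, s: not s)
p_battery_no_start_gas_ok = prob(battery_ok, lambda g, b, s: (not s) and g)

print(p_battery, p_battery_given_gas)
print(p_battery_no_start, p_battery_no_start_gas_ok)
```

Before conditioning on the outcome, learning that the gas tank is fine leaves the battery probability unchanged. After conditioning on "won't start," learning that the gas tank is fine drives the probability that the battery is fine to zero in this simplified model.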

Systems and software engineering researchers and practitioners who seek to optimize practice often espouse theories about how best to conduct system and software development and sustainment. Causal learning can help test the validity of such theories. Our work seeks to assess the empirical foundation for heuristics and rules of thumb used in managing programs, planning programs, and estimating costs.

Much prior work has focused on using regression analysis and other techniques. However, regression does not distinguish between causality and correlation, so acting on the results of a regression analysis could fail to influence outcomes in the desired way. By deriving usable knowledge from observational data, we generate actionable information and apply it to provide a higher level of confidence that interventions or corrective actions will achieve desired outcomes.

The following examples from our research highlight the importance and challenge of identifying genuine causal factors to explain phenomena.

Contrary and Surprising Results

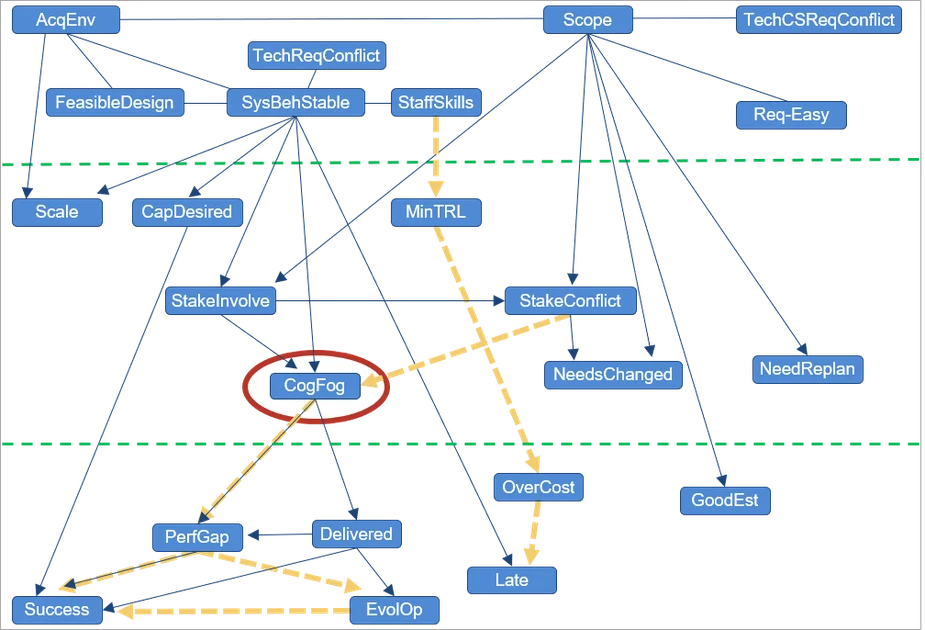

Figure 2: Complexity and Program Success

Figure 2 shows a dataset developed by Sarah Sheard that comprised approximately 40 measures of complexity (factors), seeking to identify what types of complexity drive success versus failure in DoD programs (only those factors found to be causally ancestral to program success are shown). Although many different types of complexity affect program success, the only consistent driver of success or failure that we repeatedly found is cognitive fog, which involves the loss of intellectual functions, such as thinking, remembering, and reasoning, with sufficient severity to interfere with daily functioning.

Cognitive fog is a state that teams frequently experience when having to persistently deal with conflicting data or complicated situations. Stakeholder relationships, the nature of stakeholder involvement, and stakeholder conflict all affect cognitive fog: The relationship is one of direct causality (relative to the factors included in the dataset), represented in Figure 2 by edges with arrowheads. This relationship means that if all other factors are held fixed and we change only the amount of stakeholder involvement or conflict, the amount of cognitive fog changes (and not the other way around).

Sheard’s work identified what types of program complexity drive or impede program success. The eight factors in the top horizontal segment of Figure 2 are factors available at the beginning of the program. The bottom seven are factors of program success. The middle eight are factors available during program execution. Sheard found three factors in the upper or middle bands that had promise for intervention to improve program success. We applied causal discovery to the same dataset and discovered that one of Sheard’s factors, number of hard requirements, appeared to have no causal effect on program success (and thus does not appear in the figure). Cognitive fog, however, is a dominating factor. While stakeholder relationships also play a role, all those arrows go through cognitive fog. Based on this dataset, the recommendation for a program manager is that sustaining healthy stakeholder relationships can help ensure that programs do not descend into a state of cognitive fog.

Direct Causes of Software Cost and Schedule

Readers familiar with the Constructive Cost Model (COCOMO) or Constructive Systems Engineering Cost Model (COSYSMO) may wonder what these models would have looked like had causal learning been used in their development, while sticking with the same familiar equation structure used by these models. We recently worked with some of the researchers responsible for creating and maintaining these models [formerly, members of the late Barry Boehm’s group at the University of Southern California (USC)]. We coached these researchers on how to apply causal discovery to their proprietary datasets to gain insights into what drives software costs.

From among the more than 40 factors that COCOMO and COSYSMO describe, these are the ones that we found to be direct drivers of cost and schedule:

COCOMO II effort drivers:

- size (software lines of code, SLOC)

- team cohesion

- platform volatility

- reliability

- storage constraints

- time constraints

- product complexity

- process maturity

- risk and architecture resolution

COCOMO II schedule drivers:

- size (SLOC)

- platform experience

- schedule constraint

- effort

COSYSMO 3.0 effort drivers:

- size

- level-of-service requirements

To recreate cost models in the style of COCOMO and COSYSMO, but based on causal relationships, we used a tool called Tetrad to derive graphs from the datasets and then instantiate a few simple mini-cost-estimation models. Tetrad is a suite of tools used by researchers to discover, parameterize, estimate, visualize, test, and predict from causal structure. We performed the following six steps to generate the mini-models, which produce plausible cost estimates in our testing:

- Disallow cost drivers to have direct causal relationships with one another. (Such independence of cost drivers is a central design principle for COCOMO and COSYSMO.)

- Instead of including each scale factor as a variable (as we do in effort multipliers), replace them with a new variable: scale factor times LogSize.

- Apply causal discovery to the revised dataset to obtain a causal graph.

- Use Tetrad model estimation to obtain parent-child edge coefficients.

- Lift the equations from the resulting graph to form the mini-model, reapplying estimation to properly determine the intercept.

- Evaluate the fit of the resulting model and its predictability.
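The second step can be sketched in a few lines. In the published COCOMO II form, scale factors enter through the exponent on size, so on a log scale each one multiplies the log of size; that is why the derived variable is a product. The function below is only an illustration of that data-preparation step: the record layout, the key names, the `_LS` suffix convention, and the use of the natural logarithm are assumptions, and the causal search and estimation themselves are done in Tetrad, which is not shown here.

```python
import math


def add_scaled_factors(record, scale_factors, size_key="sloc"):
    """Return a copy of `record` prepared for causal search.

    Size is replaced by its log, and each scale factor is replaced by
    (scale factor x log size). Other columns are passed through unchanged.
    """
    log_size = math.log(record[size_key])  # assumption: natural log
    prepared = {
        name: value
        for name, value in record.items()
        if name not in scale_factors and name != size_key
    }
    prepared["Log_Size"] = log_size
    for name in scale_factors:
        prepared[f"{name}_LS"] = record[name] * log_size
    return prepared


# Hypothetical project record; the values are made up for illustration.
project = {"sloc": 1000.0, "RESL": 2.0, "PVOL": 1.1}
prepared = add_scaled_factors(project, scale_factors=["RESL"])
print(prepared)
```

Effort multipliers such as `PVOL` are left as they are, while the scale factor `RESL` is carried forward only as `RESL_LS`.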

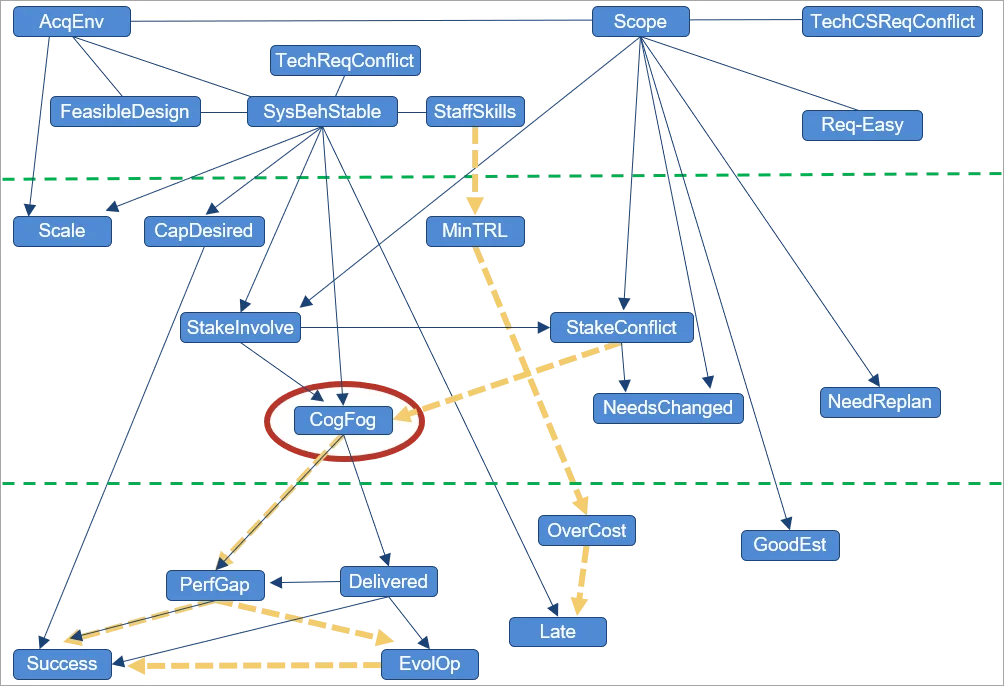

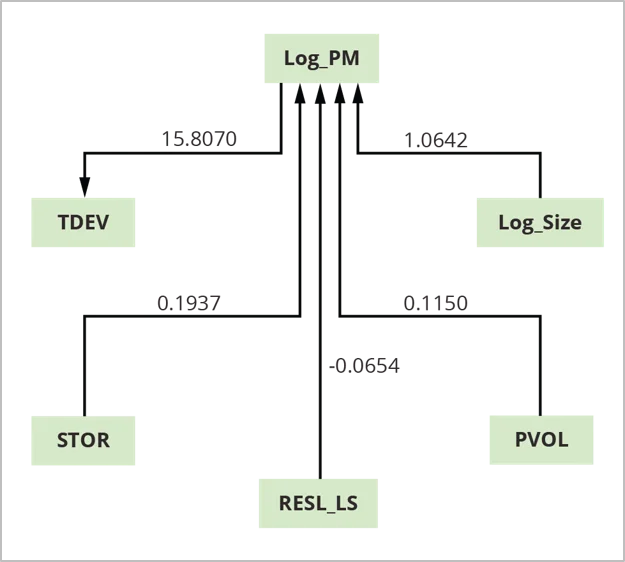

Figure 3: COCOMO II Mini-Cost Estimation Model

The advantage of the mini-model is that it identifies which factors, among many, are more likely to drive cost and schedule. According to this analysis using COCOMO II calibration data, four factors are direct causes (drivers) of project effort (Log_PM): log size (Log_Size), platform volatility (PVOL), risks from incomplete architecture times log size (RESL_LS), and memory storage (STOR). Log_PM is a driver of the time to develop (TDEV).
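One way to read such a graph is as a linear structural equation in which a child node is computed from its direct causes. The sketch below shows that reading for Log_PM and its four parents. The coefficients and intercept are placeholders invented for illustration, not the values estimated in the study, and the helper `structural_value` is a hypothetical name.

```python
def structural_value(intercept, coefficients, parent_values):
    """Evaluate one linear structural equation.

    child = intercept + sum(coefficient * parent value over all parents)
    """
    missing = set(coefficients) - set(parent_values)
    if missing:
        raise KeyError(f"missing parent values: {sorted(missing)}")
    return intercept + sum(
        coefficient * parent_values[name]
        for name, coefficient in coefficients.items()
    )


# Placeholder numbers for illustration only; NOT the fitted coefficients.
LOG_PM_INTERCEPT = 0.0
LOG_PM_COEFFICIENTS = {"Log_Size": 1.0, "PVOL": 0.5, "RESL_LS": 0.02, "STOR": 0.3}

project = {"Log_Size": 3.0, "PVOL": 1.0, "RESL_LS": 6.0, "STOR": 1.0}
log_pm = structural_value(LOG_PM_INTERCEPT, LOG_PM_COEFFICIENTS, project)

# A hypothetical intervention: reduce platform volatility, hold the rest fixed.
log_pm_after = structural_value(
    LOG_PM_INTERCEPT, LOG_PM_COEFFICIENTS, {**project, "PVOL": 0.8}
)
print(log_pm, log_pm_after)
```

Because only direct causes appear in the equation, the effect of changing one of them while holding the others fixed can be read directly from its coefficient.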

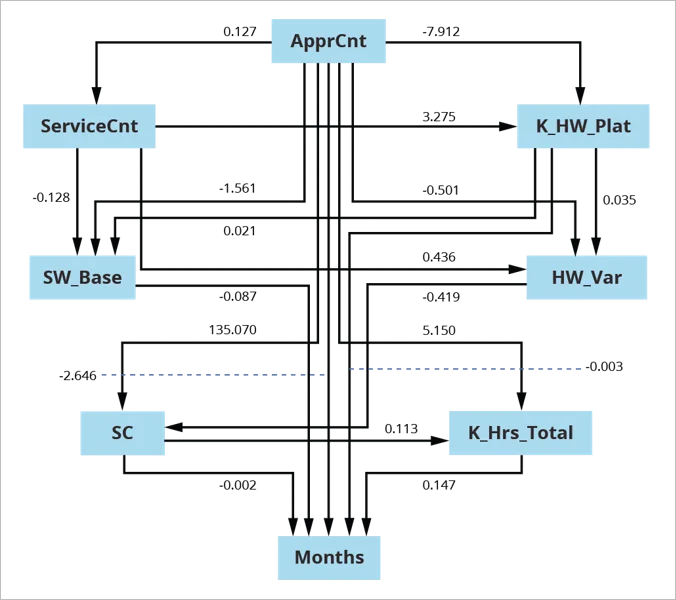

We performed a similar analysis of systems-engineering effort that showed a similar relationship with schedules and time to develop. We identified six factors that have a direct causal effect on effort. Results indicated that if we wanted to change effort, we would be better off changing one of these variables or one of their direct causes. If we were to intervene on any other variable, the effect on effort would likely be partially blocked or could degrade system capability or quality. The causal graph in Figure 4 helps to demonstrate the need to be careful about intervening on a project. These results are also generalizable and help to identify the direct causal relationships that persist beyond the bounds of a particular dataset or population that we sample.


Figure 4: Consensus Graph for U.S. Army Software Sustainment

In this example, we segmented a U.S. Army sustainment dataset into [superdomain, acquisition category (ACAT) level] pairs, resulting in five sets of data to search and estimate. Segmenting in this way addressed high fan-out for common causes, which can lead to structures typical of Simpson’s Paradox. Without segmenting by [superdomain, ACAT-level] pairs, the graphs differ from those we obtain when we segment the data. We built the consensus graph shown in Figure 4 from the resulting five searched and fitted models.

For consensus estimation, we pooled the data from individual searches with data that was previously excluded because of missing values. We used the resulting 337 releases to estimate the consensus graph using Mplus with Bootstrap in estimation.

This model is a direct out-of-the-box estimation, achieving good model fit on the first try.

Our Solution for Applying Causal Learning to Software Development

We are applying causal learning of the kind shown in the examples above to our datasets and those of our collaborators to establish key cause-effect relationships among project factors and outcomes. We are applying causal-discovery algorithms and data analysis to these cost-related datasets. Our approach to causal inference is principled (i.e., no cherry picking) and robust (to outliers). This approach is surprisingly useful for small samples, when the number of cases is fewer than five to ten times the number of variables.

If the datasets are proprietary, the SEI trains collaborators to perform causal searches on their own, as we did with USC. The SEI then needs information only about what dataset and search parameters were used as well as the resulting causal graph.

Our overall technical approach therefore consists of four threads:

- learning about the algorithms and their different settings

- encouraging the creators of these algorithms (Carnegie Mellon Department of Philosophy) to create new algorithms for analyzing the noisy and small datasets more typical of software engineering, especially within the DoD

- continuing to work with our collaborators at the University of Southern California to gain further insights into the driving factors that affect software costs

- presenting initial results and thereby soliciting cost datasets from cost estimators across and from the DoD in particular

Accelerating Progress in Software Engineering with Causal Learning

Understanding which factors drive specific program outcomes is essential to provide higher quality and secure software in a timely and affordable manner. Causal models offer better insight for program control than models based on correlation. They avoid the danger of measuring the wrong things and acting on the wrong signals.

Progress in software engineering can be accelerated by using causal learning; identifying deliberate courses of action, such as programmatic decisions and policy formulation; and focusing measurement on factors identified as causally related to outcomes of interest.

In coming years, we will

- investigate determinants and dimensions of quality

- quantify the strength of causal relationships (called causal estimation)

- seek replication with other datasets and continue to refine our methodology

- integrate the results into a unified set of decision-making principles

- use causal learning and other statistical analyses to produce additional artifacts to make Quantifying Uncertainty in Early Lifecycle Cost Estimation (QUELCE) workshops more effective

We are convinced that causal learning will accelerate and offer promise in software engineering research across many topics. By confirming causality or debunking conventional wisdom based on correlation, we hope to inform when stakeholders should act. We believe that often the wrong things are being measured and actions are being taken on the wrong signals (i.e., mainly on the basis of perceived or actual correlation).

There is significant promise in continuing to look at quality and security outcomes. We also will add causal estimation into our mix of analytical approaches and use additional machinery to quantify these causal inferences. For this we need your help, access to data, and collaborators who will provide this data, learn this methodology, and conduct it on their own data. If you want to help, please contact us.