SkyHive is an end-to-end reskilling platform that automates skills assessment, identifies future talent needs, and fills skill gaps through targeted learning recommendations and job opportunities. We work with leaders in the space, including Accenture and Workday, and have been recognized as a cool vendor in human capital management by Gartner.

We’ve already built a Labor Market Intelligence database that stores:

- Profiles of 800 million (anonymized) workers and 40 million companies

- 1.6 billion job descriptions from 150 countries

- 3 trillion unique skill combinations required for current and future jobs

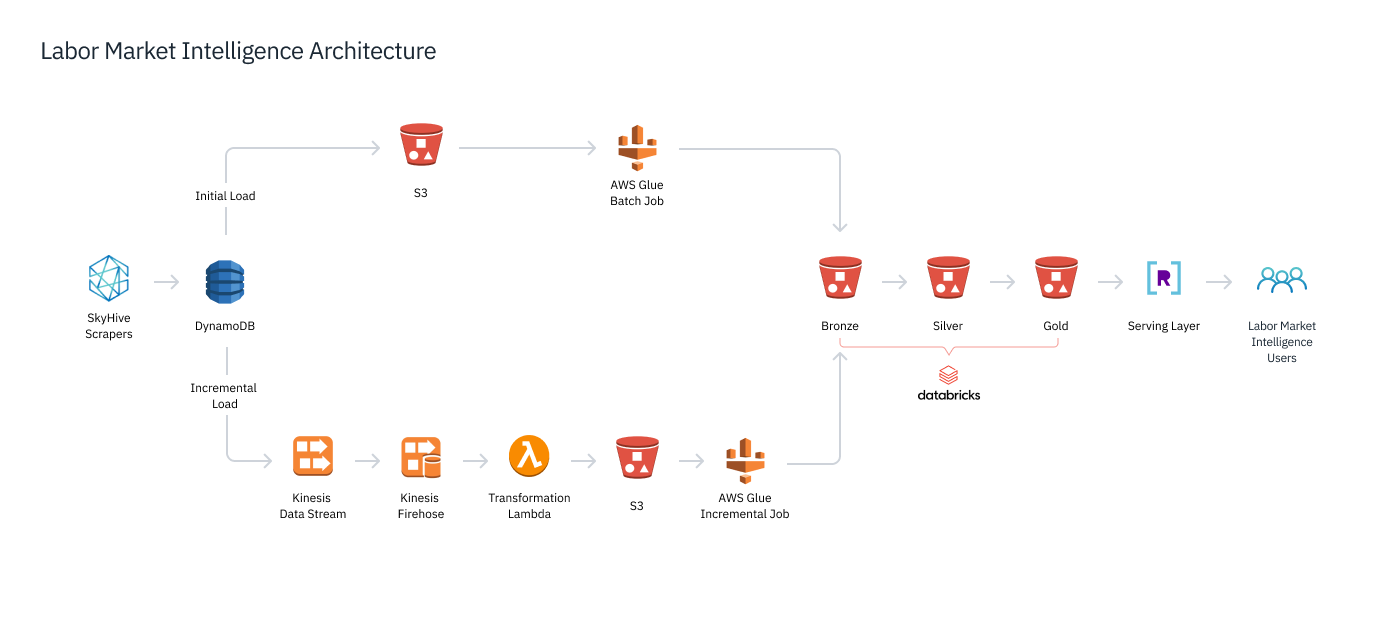

Our database ingests 16 TB of data every day, ranging from job postings scraped by our web crawlers to paid streaming data feeds. We have also done a lot of complex analytics and machine learning to glean insights into global job trends, both today and tomorrow.

Thanks to our ahead-of-the-curve technology, good word-of-mouth, and partners like Accenture, we are growing fast, adding 2-4 corporate customers every day.

Driven by Data and Analytics

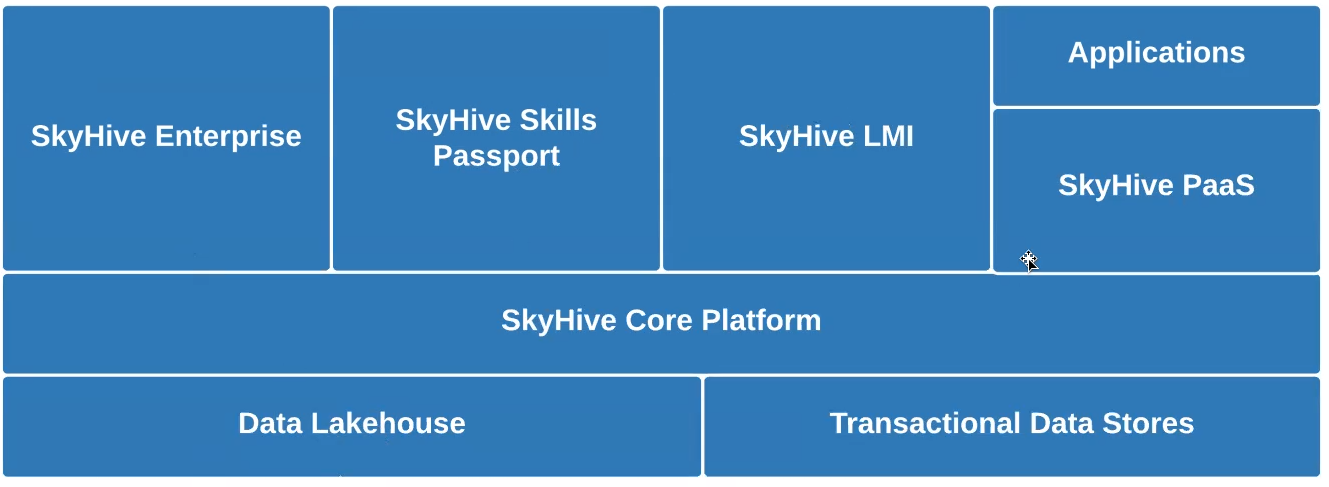

Like Uber, Airbnb, Netflix, and others, we are disrupting an industry (the global HR/HCM industry, in this case) with data-driven services that include:

- SkyHive Talent Passport: a web-based service educating workers on the job skills they need to build their careers, and resources on how to get them.

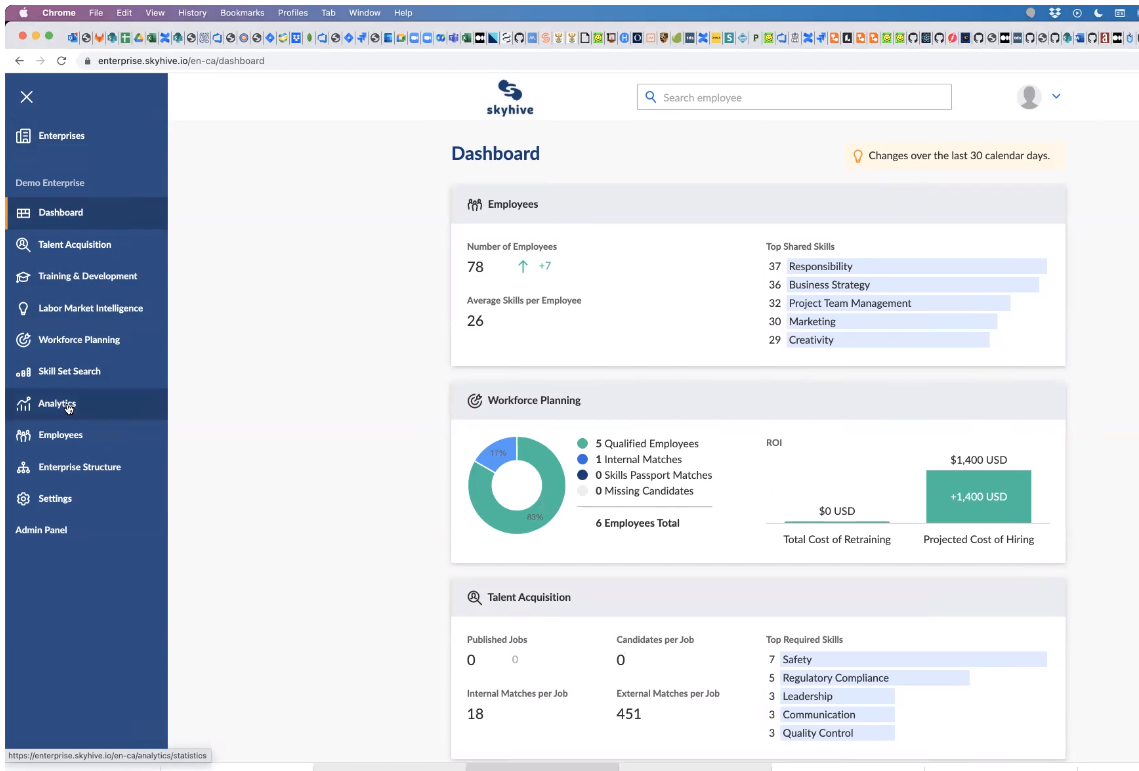

- SkyHive Enterprise: a paid dashboard (below) for executives and HR to analyze and drill into data on a) their employees’ aggregated job skills; b) what skills companies need to succeed in the future; and c) the skills gaps.

- Platform-as-a-Service via APIs: a paid service allowing businesses to tap into deeper insights, such as comparisons with competitors, and recruiting recommendations to fill skills gaps.

Challenges with MongoDB for Analytical Queries

16 TB of raw text data from our web crawlers and other data feeds is dumped daily into our S3 data lake. That data was processed and then loaded into our analytics and serving database, MongoDB.

MongoDB query performance was too slow to support complex analytics involving data across jobs, resumes, courses, and different geographies, especially when query patterns were not defined ahead of time. This made multidimensional queries and joins slow and costly, making it impossible to provide the interactive performance our users required.

For example, I had one large pharmaceutical customer ask if it would be possible to find all of the data scientists in the world with a clinical trials background and 3+ years of pharmaceutical experience. It would have been an incredibly expensive operation, but of course the customer was looking for immediate results.

When the customer asked if we could expand the search to non-English-speaking countries, I had to explain that it was beyond the product’s current capabilities, as we had problems normalizing data across different languages with MongoDB.

There were also limitations on payload sizes in MongoDB, as well as other strange hardcoded quirks. For instance, we could not query Great Britain as a country.

All in all, we had significant challenges with query latency and with getting our data into MongoDB, and we knew we needed to move to something else.

Real-Time Data Stack with Databricks and Rockset

We needed a storage layer capable of large-scale ML processing for terabytes of new data per day. We compared Snowflake and Databricks, choosing the latter because of Databricks’ compatibility with more tooling options and support for open data formats. Using Databricks, we have deployed (below) a lakehouse architecture, storing and processing our data through three progressive Delta Lake stages. Crawled and other raw data lands in our Bronze layer and subsequently goes through Spark ETL and ML pipelines that refine and enrich the data for the Silver layer. We then create coarse-grained aggregations across multiple dimensions, such as geographical location, job function, and time, which are stored in the Gold layer.
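The Bronze, Silver, and Gold flow described above can be sketched roughly as follows. This is a minimal illustration in plain Python rather than Spark and Delta Lake; the record fields, sample rows, and the `to_silver` and `to_gold` helpers are assumptions made up for the example, not SkyHive's actual pipeline.

```python
from collections import defaultdict
from datetime import date

# Hypothetical raw records standing in for crawled postings in the Bronze layer.
BRONZE = [
    {"title": " Data Scientist ", "country": "ca", "function": "Analytics", "posted": "2022-03-04"},
    {"title": "data scientist", "country": "CA", "function": "analytics", "posted": "2022-03-19"},
    {"title": "Nurse", "country": "US", "function": "Healthcare", "posted": "2022-04-02"},
    {"title": "", "country": "US", "function": "Healthcare", "posted": "2022-04-03"},  # unusable
]


def to_silver(bronze_rows):
    """Refine raw rows: drop records without a title and normalize the fields."""
    silver = []
    for row in bronze_rows:
        title = row["title"].strip().lower()
        if not title:
            continue  # skip records with no usable title
        silver.append({
            "title": title,
            "country": row["country"].upper(),
            "function": row["function"].lower(),
            "posted": date.fromisoformat(row["posted"]),
        })
    return silver


def to_gold(silver_rows):
    """Coarse-grained posting counts keyed by (country, job function, month)."""
    counts = defaultdict(int)
    for row in silver_rows:
        key = (row["country"], row["function"], row["posted"].strftime("%Y-%m"))
        counts[key] += 1
    return dict(counts)


silver = to_silver(BRONZE)
gold = to_gold(silver)
print(gold)
```

In a real lakehouse each stage would be a Delta table written by a Spark job; the point of the sketch is only that the Gold layer holds pre-aggregated rows per dimension combination, which a serving layer can then slice further.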

We have SLAs on query latency in the low hundreds of milliseconds, even as users make complex, multi-faceted queries. Spark was not built for that: such queries are treated as data jobs that would take tens of seconds. We needed a real-time analytics engine, one that creates an uber-index of our data in order to deliver multidimensional analytics in a heartbeat.

We chose Rockset to be our new user-facing serving database. Rockset continuously synchronizes with the Gold layer data and instantly builds an index of that data. Taking the coarse-grained aggregations in the Gold layer, Rockset queries and joins across multiple dimensions and performs the finer-grained aggregations required to serve user queries. That enables us to serve: 1) pre-defined Query Lambdas sending regular data feeds to customers; 2) ad hoc free-text searches such as “What are all of the remote jobs in the United States?”
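As a rough illustration of the idea behind a pre-defined, parameterized query that performs a finer-grained aggregation over Gold-layer rows, here is a minimal sketch. It uses Python's built-in `sqlite3` module as a stand-in SQL engine, not Rockset; the `gold_skill_counts` table, its columns, the sample rows, and the `run_named_query` helper are all hypothetical.

```python
import sqlite3

# Hypothetical named queries, loosely modeled on the idea of a saved,
# parameterized SQL statement that callers invoke by name.
NAMED_QUERIES = {
    "top_skills_by_country": """
        SELECT skill, SUM(postings) AS total
        FROM gold_skill_counts
        WHERE country = :country
        GROUP BY skill
        ORDER BY total DESC, skill ASC
        LIMIT :limit
    """,
}


def build_demo_db():
    """Create an in-memory table of made-up, pre-aggregated Gold-style rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE gold_skill_counts "
        "(country TEXT, month TEXT, skill TEXT, postings INTEGER)"
    )
    conn.executemany(
        "INSERT INTO gold_skill_counts VALUES (?, ?, ?, ?)",
        [
            ("CA", "2022-03", "python", 120),
            ("CA", "2022-04", "python", 90),
            ("CA", "2022-03", "sql", 150),
            ("CA", "2022-04", "nursing", 40),
            ("US", "2022-03", "python", 500),
        ],
    )
    return conn


def run_named_query(conn, name, **params):
    """Look up a saved query by name and execute it with bound parameters."""
    return conn.execute(NAMED_QUERIES[name], params).fetchall()


conn = build_demo_db()
top = run_named_query(conn, "top_skills_by_country", country="CA", limit=2)
print(top)
```

Keeping the SQL in one named place and binding only the parameters means an application never assembles query text itself, which is the property that makes it simple to expose such queries behind an API.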

Sub-Second Analytics and Faster Iterations

After several months of development and testing, we switched our Labor Market Intelligence database from MongoDB to Rockset and Databricks. With Databricks, we have improved our ability to handle huge datasets as well as efficiently run our ML models and other non-time-sensitive processing. Meanwhile, Rockset enables us to support complex queries on large-scale data and return answers to users in milliseconds with little compute cost.

For instance, our customers can search for the top 20 skills in any country in the world and get results back in near real time. We can also support a much higher volume of customer queries, as Rockset alone can handle millions of queries a day, regardless of query complexity, the number of concurrent queries, or sudden scale-ups elsewhere in the system (such as from bursty incoming data feeds).

We are now easily hitting all of our customer SLAs, including our sub-300 millisecond query time guarantees. We can provide the real-time answers that our customers need and our competitors cannot match. And with Rockset’s SQL-to-REST API support, presenting query results to applications is easy.

Rockset also speeds up development time, boosting both our internal operations and external sales. Previously, it took us three to nine months to build a proof of concept for customers. With Rockset features such as SQL-to-REST using Query Lambdas, we can now deploy dashboards customized to the prospective customer hours after a sales demo.

We call this “product day zero.” We don’t have to sell to our prospects anymore; we just ask them to go and try us out. They’ll discover they can interact with our data with no noticeable delay. Rockset’s low-ops, serverless cloud delivery also makes it easy for our developers to deploy new services to new users and customer prospects.

We are planning to further streamline our data architecture (above) while expanding our use of Rockset into a couple of other areas:

- geospatial queries, so that users can search by zooming in and out of a map;

- serving data to our ML models.

These projects would likely take place over the next year. With Databricks and Rockset, we have already transformed and built out a beautiful stack. But there is still much more room to grow.