Over the past few months, interest in Large Language Models (LLMs) from Public Sector agencies has skyrocketed as LLMs are fundamentally changing the expectations that people have of their interactions with computers and data. From Databricks' perspective, nearly every Public Sector customer and prospect we interact with feels a mandate to inject LLMs into their mission. We repeatedly hear questions about what LLMs (like Databricks' Dolly) are, what they can be used for, and how the Databricks Lakehouse will support LLM-related applications. In this post, we will touch on these questions in the context of the unique needs, opportunities and constraints of Public Sector organizations. We will also focus on the benefits of creating, owning and curating your own LLM versus adopting a technology that requires third-party data sharing, like ChatGPT.

What are LLMs?

Today's LLMs represent the latest version in a series of innovations in natural language processing, starting roughly in 2017 with the rise of the transformer model architecture. These transformer-based models have long possessed uncanny abilities to understand human language well enough to perform tasks such as identifying sentiment; extracting named people, places and things; and translating documents from one language to another. They've also been capable of generating interesting text from a prompt, with varying degrees of quality and accuracy. More recently, researchers and developers have discovered that very large language models, "pre-trained" on very large and diverse sources of text, can be "fine-tuned" to follow a variety of instructions from a human to generate useful information.

Previously, the best practice was to train separate models for each language-related task. The model training process required resources: curated data, compute (typically multiple GPUs), and advanced data science and software development expertise. While such models can be highly accurate, there are clearly resource constraints, both in terms of computation and human effort, when scaling up their usage. With the rapid rise of ChatGPT to stardom, we now see that a single LLM, with the right amount of context and the right prompt, can be used to deliver on many different tasks, sometimes with better accuracy than a more specialized model. And the LLMs' ability to generate new text ("Generative AI") is both fascinating and extremely useful.

What can LLMs be used for in the Public Sector?

Private sector organizations have reported amazing benefits from LLMs, such as code generation and migration, automated customer feedback categorization and responses, call center chatbots, report generation, and much more. As a microcosm of many different industries, Public Sector agencies have the same LLM opportunities, in addition to other unique needs. Common Public Sector use cases include:

- Regulatory compliance assistance. With its ability to interpret and process text, an LLM can assist in identifying compliance requirements by analyzing regulatory documents, legal texts, and relevant case law. It can help government agencies and businesses understand the implications of regulations and ensure adherence to the law.

- Training and education assistant. Scale and accelerate learning for students by serving as a virtual instructor, answering questions, explaining complex concepts, retrieving relevant portions of lecture recordings, or recommending course catalog options.

- Summarizing and answering questions from technical documents. Perhaps the most ubiquitous LLM-related use case in the Public Sector is to extract knowledge from thousands or millions of documents, including PDFs and emails, into a format conducive to quickly finding relevant content based on search criteria, then use the relevant content to generate summaries or reports.

- Open-source intelligence. LLMs can greatly enhance the Intelligence Community's analysis of Open Source Intelligence (OSINT) by processing and analyzing vast amounts of publicly available multilingual information. LLMs can extract key entities, relationships, sentiments, and contextual understanding from diverse sources such as social media, news articles, and reports, then efficiently summarize and organize this information, aiding analysts in rapidly comprehending and extracting insights from large volumes of OSINT data.

- Modernizing legacy code bases. Government agencies continue to move data workloads off of mainframes, on-premise data warehouses, and proprietary analytics software. By putting coding assistants in the hands of developers and analysts to suggest code as they go, or training custom LLMs to handle bulk code conversion, the pace of migration can accelerate while knowledge workers gracefully acquire relevant software skills.

- Human resources. As the nation's largest employer, the Federal government faces unique challenges in hiring and ensuring employee satisfaction. Leveraging LLMs in the HR field can help address these challenges by automating resume screening, matching candidates to job descriptions, and analyzing employee feedback to improve hiring processes and enhance workforce engagement. Additionally, LLMs can assist in ensuring HR policy compliance, supporting diversity and inclusion initiatives, and providing personalized onboarding and career development recommendations.
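To make the document question-answering use case above more concrete, here is a minimal sketch of the "find relevant content, then generate" pattern. It is an illustration under stated assumptions, not a Databricks API: the function names, the sample corpus, and the simple word-overlap scoring are all hypothetical, and a production system would typically rank passages with embeddings and send the assembled prompt to an actual LLM.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase a string and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query, document):
    """Count how many query tokens (with multiplicity) also occur in the document."""
    query_counts = Counter(tokenize(query))
    doc_counts = Counter(tokenize(document))
    return sum(min(count, doc_counts[token]) for token, count in query_counts.items())


def retrieve(query, documents, top_k=2):
    """Return up to top_k documents with the highest overlap score, dropping non-matches."""
    ranked = sorted(documents, key=lambda doc: score(query, doc), reverse=True)
    return [doc for doc in ranked[:top_k] if score(query, doc) > 0]


def build_prompt(query, passages):
    """Assemble a prompt asking a model to answer using only the retrieved passages."""
    context = "\n".join(f"- {passage}" for passage in passages)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}\n"
        "Answer:"
    )


# Hypothetical sample corpus, invented purely for illustration.
DOCUMENTS = [
    "Travel reimbursement requests must be filed within 30 days of the trip.",
    "Badge renewals are processed by the facility security office.",
    "Reimbursement for training courses requires supervisor approval.",
]

if __name__ == "__main__":
    question = "How do I file a travel reimbursement request?"
    print(build_prompt(question, retrieve(question, DOCUMENTS)))
```

The prompt returned by `build_prompt` is what would be passed to the LLM of choice; keeping retrieval and prompt assembly as separate steps makes it easy to swap in a stronger ranking method later.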

How will Databricks support the needs of Public Sector organizations in a world powered by LLMs?

While truly powerful, LLMs also introduce a new set of challenges that is amplified by some of the operating constraints native to Public Sector organizations. Let's dissect a few of these and align them with the Databricks Lakehouse capabilities:

Challenge #1: Data sovereignty and governance

The challenge

Most Public Sector organizations have strict regulatory controls around their data. These controls exist for privacy, security, and the need to preserve secrecy in some cases. Even the simple task of asking an LLM a question or set of questions could reveal proprietary information. Additionally, most Federal agencies will need to fine-tune LLMs to meet their particular requirements. For these reasons, it's logical to assume that Public Sector agencies will be limited in their use of public models. It's likely that they'll require the models to be fine-tuned in an environment that guarantees their confidentiality and security, and that interactions with the models via various prompting methods are also confidential.

Databricks' solution

Databricks' Lakehouse platform has the tools necessary to develop and deploy end-to-end LLM applications. (More on that later.) Moreover, Databricks possesses the necessary certifications to process data for the vast majority of U.S. Public Sector organizations. Databricks is a trusted and capable partner for organizations seeking to harness the full power of LLMs without the risks that come from leveraging proprietary LLMs-as-a-service like ChatGPT or Bard.

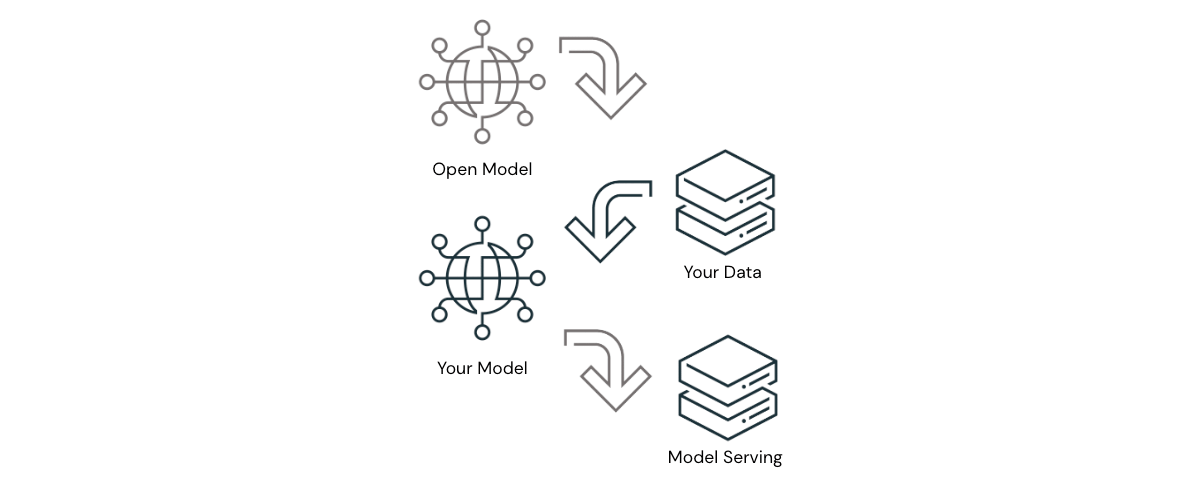

Beyond Databricks, the industry is seeing increased evidence that open-source LLMs, used correctly, can deliver results that approach parity with the leading proprietary LLMs. The evidence is strongest in use cases where the proprietary LLMs must understand nuanced context or instructions on which they have not previously been trained. In these cases, open-source LLMs can be either prompted with or fine-tuned on organization-specific data to deliver astounding results. In this solution architecture, organizations can achieve world-class results with modest amounts of compute and development time, without data ever leaving approved boundaries. For Public Sector organizations, this represents a significant advantage that cannot be overlooked.

Databricks' belief in the power of open-source LLMs is reinforced by our release of Dolly 2.0, the first open-source, instruction-following LLM fine-tuned on a human-generated instruction dataset licensed for research and commercial use. Dolly's release has been followed by a wave of other capable open-source LLMs, some of which have very impressive performance. Databricks strives to give Public Sector organizations a platform to build applications with their LLM of choice, open-source or commercial, and we're excited for what's yet to come.

Challenge #2: Architectural complexity

The challenge

Data estate modernization is still top of mind for most technical leaders in the Public Sector. Largely gone are the days of on-premise data warehouses, typically replaced by a data warehouse or lakehouse in the cloud. Organizations that have not yet migrated to the cloud, or that opted for a data warehouse in the cloud, now face another inflection point: how to adopt LLMs in an architecture that can't accommodate them? Given the immense potential of LLMs to impact agencies' missions and the public servants delivering on them, it's important to establish a future-proof architecture. Enter the lakehouse.

Databricks' solution

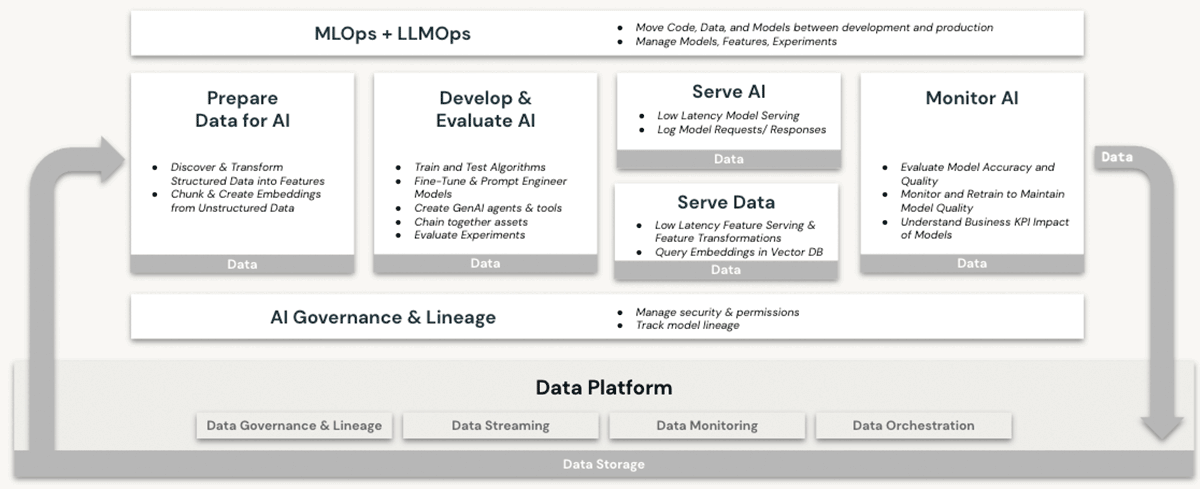

Databricks has long been a capable home for machine learning (ML) and artificial intelligence (AI) workloads. Customers have been using production-grade LLMs and their predecessors on Databricks for years, taking advantage of features such as:

- Scalable compute for preprocessing of unstructured data like text, images and audio

- Access to the full suite of open-source ML/AI libraries

- A native best-in-class notebook development environment, with excellent support for IDE integration as well

- Data governance capabilities via Unity Catalog that ensure proper access controls to:

  - Structured data (databases and tables)

  - Unstructured data (files, images, documents)

  - Models (LLMs or otherwise)

- GPU compute options for the training of and predictions from ML models, now a prerequisite for working with transformer-based LLMs

- End-to-end model lifecycle management with MLflow and Unity Catalog. Models are treated as first-class citizens, with lineage to their source data and training events, and can be deployed in either batch or real-time mode

- Model serving capabilities, which become increasingly important as organizations fine-tune, host and deploy their own LLMs

None of these features are offered in a data warehouse, even in the cloud. To use LLMs in conjunction with a data warehouse, an organization would need to procure other software services for all aspects of the model training and deployment processes, and send data back and forth between these services. Only the Databricks Lakehouse architecture offers the architectural simplicity of performing all LLM operations in a single platform, fully delivering on the benefits explained in our discussion of data sovereignty above.

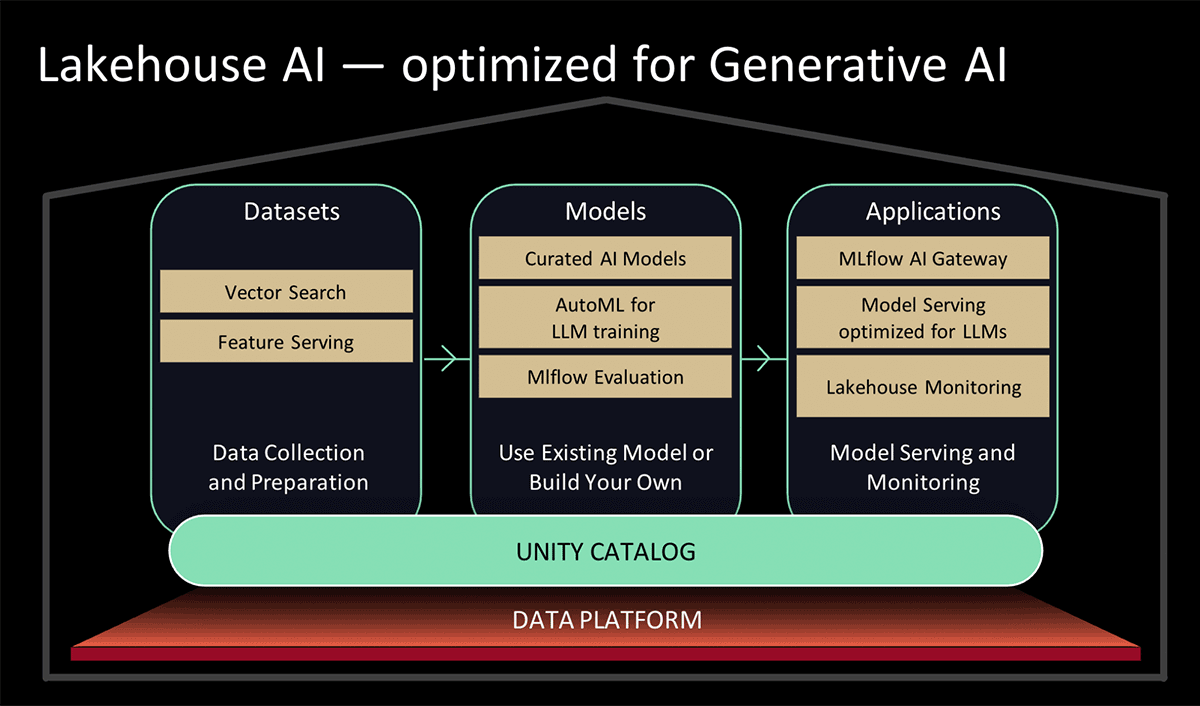

At Data and AI Summit 2023, Databricks announced Lakehouse AI, which adds several major new LLM-related features that significantly simplify the architecture for LLMOps, including:

- Vector Search for indexing. A Databricks-hosted vector database helps teams quickly index their organizations' data as embedding vectors and perform low-latency vector similarity searches in real-time deployments.

- Lakehouse Monitoring. The first unified data and AI monitoring service that allows users to simultaneously track the quality of both their data and AI assets.

- AI Functions. Data analysts and data engineers can now use LLMs and other machine learning models within an interactive SQL query or SQL/Spark ETL pipeline.

- Unified Data & AI Governance. Enhancements to the Unity Catalog to provide comprehensive governance and lineage tracking of both data and AI assets in a single unified experience.

- MLflow AI Gateway. The MLflow AI Gateway, part of MLflow 2.5, is a workspace-level API gateway that allows organizations to create and share routes, which can then be configured with various rate limits, caching, cost attribution, etc. to manage costs and usage.

- MLflow 2.4. This release provides a comprehensive set of LLMOps tools for model evaluation.
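As a rough illustration of what "vector similarity search" in the list above means, the sketch below ranks a handful of documents by cosine similarity to a query vector. It is a toy, in-memory stand-in and does not use or represent the Databricks Vector Search API; the index contents, document names, and three-dimensional embeddings are hypothetical, whereas real embedding models emit vectors with hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_similar(query_vector, index, k=2):
    """Return the k (doc_id, similarity) pairs most similar to query_vector, best first."""
    scored = [
        (doc_id, cosine_similarity(query_vector, vector))
        for doc_id, vector in index.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical embeddings, invented for illustration only.
INDEX = {
    "policy_memo": [0.9, 0.1, 0.0],
    "budget_report": [0.1, 0.9, 0.2],
    "hr_handbook": [0.7, 0.2, 0.1],
}

if __name__ == "__main__":
    for doc_id, similarity in top_k_similar([1.0, 0.0, 0.0], INDEX):
        print(f"{doc_id}: {similarity:.3f}")
```

This brute-force scan compares the query against every stored vector, which is fine for a demonstration; a hosted vector database exists precisely because that approach stops being low-latency at scale.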

Challenge #3: Talent gap

The challenge

Government agencies have struggled with a persistent "brain drain" in recent years, particularly in roles that overlap with hot technological trends such as cybersecurity, cloud computing, and ML/AI. The current intense focus on LLMs is driving even more demand for talented practitioners in ML/AI. Inevitably, the allure and perks that come with employment in big tech and the startup scene will exacerbate the talent shortage in the public sector. Government leadership needs access to platforms and partnerships that can help them easily adopt LLMs and empower their employees to become self-sufficient with them.

Databricks' solution

Databricks is busy rolling out features that simplify and expand upon the existing capabilities to work with LLMs in the lakehouse platform. These include:

- Simplified patterns for using pre-trained LLMs from Hugging Face for inference tasks in data pipelines, or fine-tuning them for better performance on your own data in Databricks.

- Simplifying the process and improving the performance of loading data from Apache Spark into Hugging Face model training or fine-tuning jobs.

- Industry-specific LLM solution accelerators, showing repeatable implementation patterns for quick wins, such as customer service analytics and product discovery

- The MLflow 2.3 release, featuring native LLM support, specifically:

  - Three brand new model flavors: Hugging Face Transformers, OpenAI functions, and LangChain.

  - Significantly improved model download and upload speed to and from cloud services via multi-part download and upload for model files.

- A built-in Databricks SQL function allowing users to access LLMs directly from SQL. This feature can circumvent lengthy and complex language model development processes by allowing analysts to simply craft effective LLM prompts

- As announced at Data & AI Summit 2023:

  - Additions to Databricks' UI-based AutoML service that can fine-tune LLMs for text classification as well as embedding models; and

  - Curated models, backed by optimized Model Serving for high performance. Rather than spending time researching the best open-source generative AI models for your use case, you can rely on models curated by Databricks experts for common use cases.

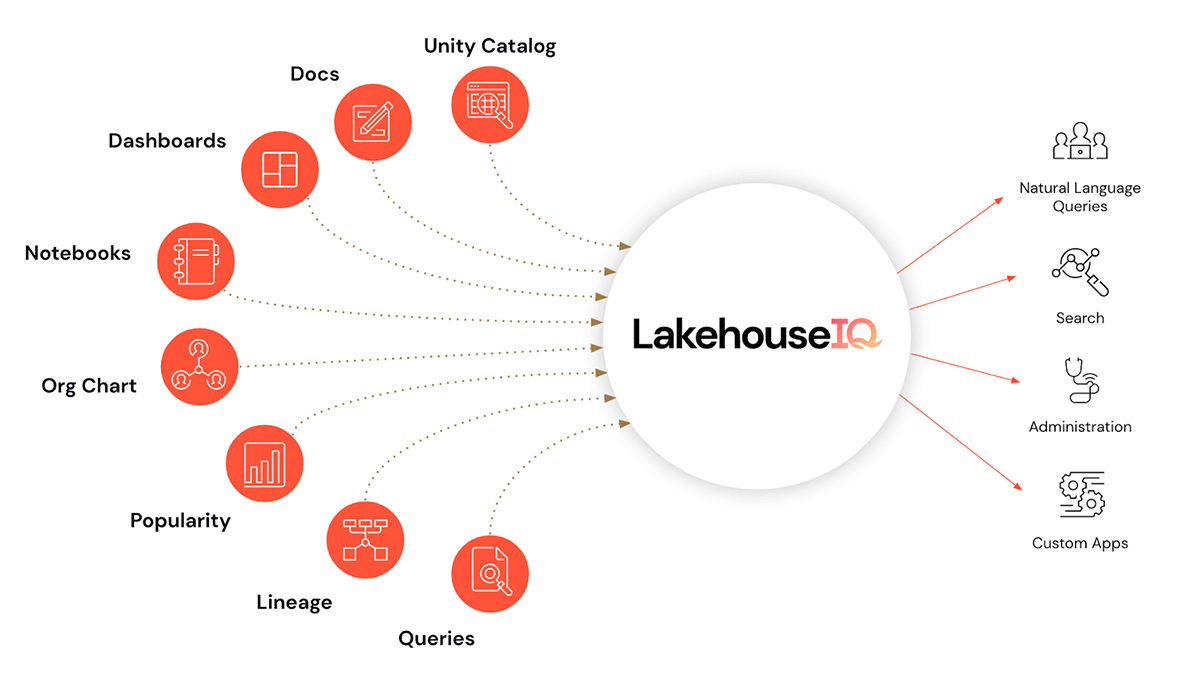

- And perhaps the icing on the cake, LakehouseIQ, a knowledge engine that learns the unique nuances of your business and data to power natural language access to it for a wide range of use cases.
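The idea behind calling an LLM from a query, mentioned in the list above, is that an analyst writes a prompt template once and it is applied to every row of a table. The sketch below mimics that pattern in plain Python. It is not the Databricks SQL function itself: `render_prompt`, `apply_llm`, the sample rows, and especially `fake_model` (a trivial keyword rule standing in for a real model call) are hypothetical names introduced only so the example runs on its own.

```python
def render_prompt(template, row):
    """Fill a prompt template with the fields of one table row."""
    return template.format(**row)


def apply_llm(rows, template, model):
    """Return a copy of each row with an added 'llm_output' field from model(prompt)."""
    return [{**row, "llm_output": model(render_prompt(template, row))} for row in rows]


def fake_model(prompt):
    """Stand-in for a real LLM call: a keyword rule, used only to keep this runnable."""
    return "negative" if "late" in prompt.lower() else "positive"


TEMPLATE = "Classify the sentiment of this feedback as positive or negative: {feedback}"

# Hypothetical rows, invented for illustration.
ROWS = [
    {"id": 1, "feedback": "My permit arrived two weeks late."},
    {"id": 2, "feedback": "The help desk resolved my issue quickly."},
]

if __name__ == "__main__":
    for result in apply_llm(ROWS, TEMPLATE, fake_model):
        print(result["id"], result["llm_output"])
```

Because the model is passed in as an ordinary callable, the same template logic could be pointed at whichever hosted or self-managed LLM an agency has approved, without changing the analyst-facing prompt.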

In addition to making LLMs easy to use in Databricks, we're also introducing LLM training and enablement programs to help organizations scale up their LLM proficiency. These are delivered at a level that's approachable for Databricks' public sector users.

- Partnering with EdX to deliver expert-led online courses that are specifically focused on building and using language models in modern applications

Conclusions and next steps

Opportunities to harness LLMs to accelerate Public Sector use cases abound. Immense value remains buried in legacy data, just waiting to be discovered and applied to current problems. Come learn more about how Databricks can help you adopt LLMs for your mission by participating in our webinar Large Language Models in the Public Sector on August 2 at Noon, EDT. Also, peruse the feature preview signups listed in the Lakehouse AI announcement and see which ones your organization qualifies for.