PAIR (People + AI Research) first launched in 2017 with the belief that “AI can go much further, and be more useful to all of us, if we build systems with people in mind at the start of the process.” We continue to focus on making AI more understandable, interpretable, fun, and usable by more people around the world. It’s a mission that is particularly timely given the emergence of generative AI and chatbots.

Today, PAIR is part of the Responsible AI and Human-Centered Technology team within Google Research, and our work spans this larger research space: we advance foundational research on human-AI interaction (HAI) and machine learning (ML); we publish educational materials, including the PAIR Guidebook and Explorables (such as the recent Explorable on how and why models sometimes make incorrect predictions confidently); and we develop software tools like the Learning Interpretability Tool to help people understand and debug ML behaviors. Our inspiration this year is “changing the way people think about what THEY can do with AI.” This vision is inspired by the rapid emergence of generative AI technologies, such as large language models (LLMs) that power chatbots like Bard, and new generative media models like Google’s Imagen, Parti, and MusicLM. In this blog post, we review recent PAIR work that is changing the way we engage with AI.

Generative AI research

Generative AI is creating a lot of excitement, and PAIR is involved in a range of related research, from using language models to create generative agents to studying how artists adopted generative image models like Imagen and Parti. These latter “text-to-image” models let a person input a text-based description of an image for the model to generate (e.g., “a gingerbread house in a forest in a cartoony style”). In a forthcoming paper titled “The Prompt Artists” (to appear in Creativity and Cognition 2023), we found that users of generative image models strive not only to create beautiful images, but also to create unique, innovative styles. To help achieve these styles, some would even seek out unusual vocabulary to develop their visual style. For example, they may visit architectural blogs to learn what domain-specific vocabulary they can adopt to help produce distinctive images of buildings.

We are also researching solutions to challenges faced by prompt creators who, with generative AI, are essentially programming without using a programming language. As an example, we developed new methods for extracting semantically meaningful structure from natural language prompts. We have applied these structures to prompt editors to provide features similar to those found in other programming environments, such as semantic highlighting, autosuggest, and structured data views.

The growth of generative LLMs has also opened up new ways to solve important long-standing problems. Agile classifiers are one approach we’re taking to leverage the semantic and syntactic strengths of LLMs to solve classification problems related to safer online discourse, such as nimbly blocking newer types of toxic language as quickly as it may evolve online. The big advance here is the ability to develop high-quality classifiers from very small datasets, as small as 80 examples. This suggests a positive future for online discourse and better moderation of it: instead of collecting millions of examples to attempt to create universal safety classifiers for all use cases over months or years, more agile classifiers might be created by individuals or small organizations and tailored for their specific use cases, and iterated on and adapted in the time-span of a day (e.g., to block a new kind of harassment being received or to correct unintended biases in models). As an example of their utility, these methods recently won a SemEval competition to identify and explain sexism.

We’ve also developed new state-of-the-art explainability methods to identify the role of training data in model behaviors and misbehaviors. By combining training data attribution methods with agile classifiers, we also found that we can identify mislabelled training examples. This makes it possible to reduce the noise in training data, leading to significant improvements in model accuracy.

Collectively, these methods are critical to help the scientific community improve generative models. They provide techniques for fast and effective content moderation and dialogue safety methods that help support creators whose content is the basis for generative models’ amazing outcomes. In addition, they provide direct tools to help debug model misbehavior, which leads to better generation.

Visualization and education

To lower barriers in understanding ML-related work, we regularly design and publish highly visual, interactive online essays, called AI Explorables, that provide accessible, hands-on ways to learn about key ideas in ML. For example, we recently published new AI Explorables on the topics of model confidence and unintended biases. In our latest Explorable, “From Confidently Incorrect Models to Humble Ensembles,” we discuss the problem with model confidence: models can sometimes be very confident in their predictions… and yet completely incorrect. Why does this happen and what can be done about it? Our Explorable walks through these issues with interactive examples and shows how we can build models that have more appropriate confidence in their predictions by using a technique called ensembling, which works by averaging the outputs of multiple models. Another Explorable, “Searching for Unintended Biases with Saliency,” shows how spurious correlations can lead to unintended biases, and how techniques such as saliency maps can detect some biases in datasets, with the caveat that it can be difficult to see bias when it’s more subtle and sporadic in a training set.

PAIR designs and publishes AI Explorables, interactive essays on timely topics and new methods in ML research, such as “From Confidently Incorrect Models to Humble Ensembles,” which looks at how and why models offer incorrect predictions with high confidence, and how “ensembling” the outputs of many models can help avoid this.
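The ensembling idea mentioned above, averaging the outputs of multiple models, can be illustrated with a minimal sketch. This is not code from the Explorable: the logits below are made-up values for three hypothetical models, and the helper names `softmax` and `ensemble_average` are chosen only for this illustration.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    shift = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def ensemble_average(per_model_probs):
    """Average class probabilities across models (one list per model)."""
    n_models = len(per_model_probs)
    n_classes = len(per_model_probs[0])
    return [
        sum(probs[c] for probs in per_model_probs) / n_models
        for c in range(n_classes)
    ]

# Hypothetical logits from three independently trained models for one input.
model_logits = [
    [4.0, 0.0],  # model A: very confident in class 0
    [0.0, 3.0],  # model B: confident in class 1
    [1.0, 0.5],  # model C: mildly prefers class 0
]
per_model_probs = [softmax(logits) for logits in model_logits]
ensemble_probs = ensemble_average(per_model_probs)

print("per-model max confidence:", [round(max(p), 3) for p in per_model_probs])
print("ensemble probabilities:  ", [round(p, 3) for p in ensemble_probs])
```

When the individual models disagree, as they do in this toy input, the averaged probabilities end up closer to uniform than the most confident single model, which is the "humbler" behavior the Explorable describes.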

Transparency and the Data Cards Playbook

Continuing to advance our goal of helping people to understand ML, we promote transparent documentation. In the past, PAIR and Google Cloud developed model cards. Most recently, we presented our work on Data Cards at ACM FAccT’22 and open-sourced the Data Cards Playbook, a joint effort with the Technology, AI, Society, and Culture team (TASC). The Data Cards Playbook is a toolkit of participatory activities and frameworks to help teams and organizations overcome obstacles when setting up a transparency effort. It was created using an iterative, multidisciplinary approach rooted in the experiences of over 20 teams at Google, and comes with four modules: Ask, Inspect, Answer and Audit. These modules contain a variety of resources that can help you customize Data Cards to your organization’s needs:

- 18 Foundations: Scalable frameworks that anyone can use on any dataset type

- 19 Transparency Patterns: Evidence-based guidance to produce high-quality Data Cards at scale

- 33 Participatory Activities: Cross-functional workshops to navigate transparency challenges for teams

- Interactive Lab: Generate interactive Data Cards from markdown in the browser

The Data Cards Playbook is available as a learning pathway for startups, universities, and other research groups.

Software Tools

Our team thrives on creating tools, toolkits, libraries, and visualizations that expand access and improve understanding of ML models. One such resource is Know Your Data, which allows researchers to test a model’s performance for various scenarios through interactive qualitative exploration of datasets that they can use to find and fix unintended dataset biases.

Recently, PAIR released a new version of the Learning Interpretability Tool (LIT) for model debugging and understanding. LIT v0.5 provides support for image and tabular data, new interpreters for tabular feature attribution, a “Dive” visualization for faceted data exploration, and performance improvements that allow LIT to scale to 100k dataset entries. You can find the release notes and code on GitHub.

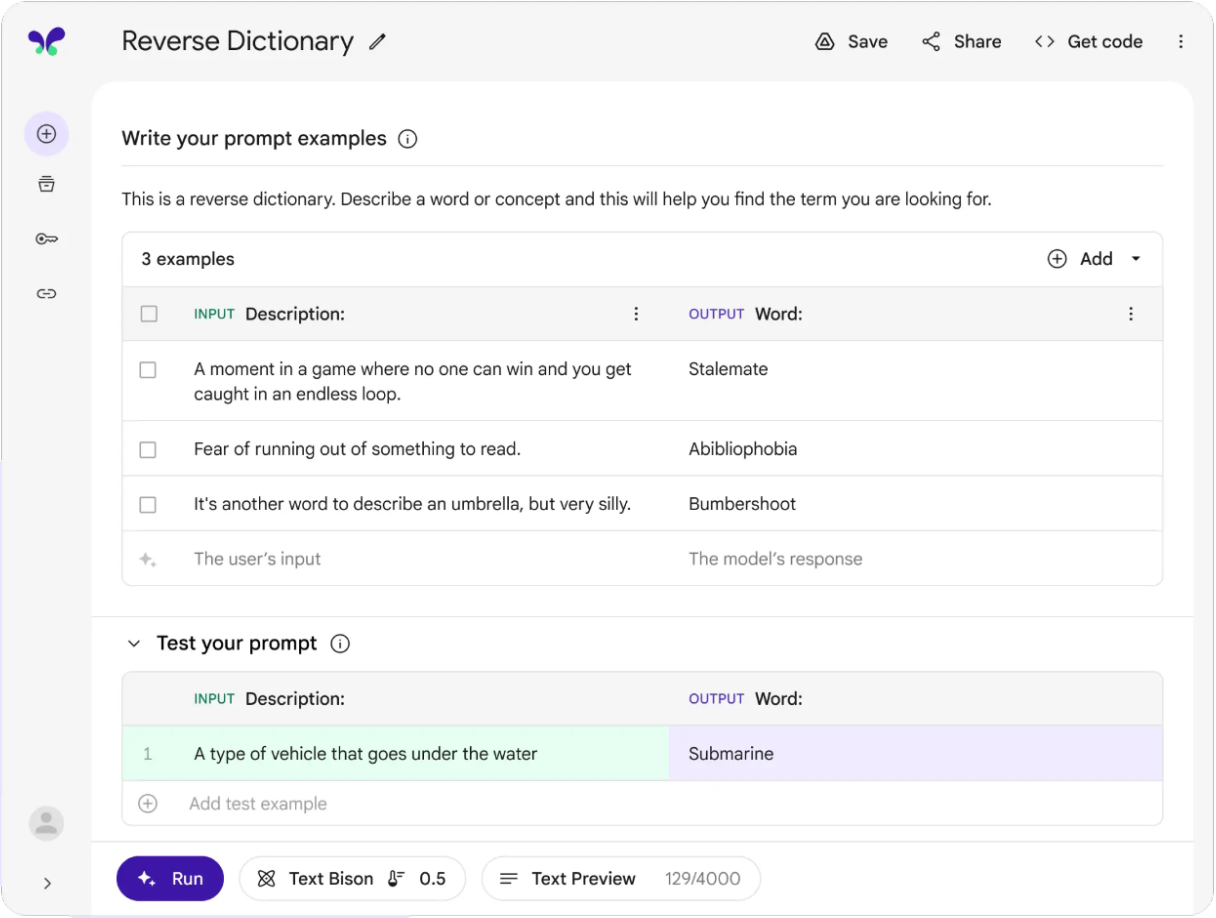

PAIR has also contributed to MakerSuite, a tool for rapid prototyping with LLMs using prompt programming. MakerSuite builds on our earlier research on PromptMaker, which won an honorable mention at CHI 2022. MakerSuite lowers the barrier to prototyping ML applications by broadening the types of people who can author these prototypes and by shortening the time spent prototyping models from months to minutes.

A screenshot of MakerSuite, a tool for rapidly prototyping new ML models using prompt-based programming, which grew out of PAIR’s prompt programming research.

Ongoing work

As the world of AI moves quickly ahead, PAIR is excited to continue to develop new tools, research, and educational materials to help change the way people think about what THEY can do with AI.

For example, we recently conducted an exploratory study with five designers (presented at CHI this year) that looks at how people with no ML programming experience or training can use prompt programming to quickly prototype functional user interface mock-ups. This prototyping speed can help inform designers on how to integrate ML models into products, and enables them to conduct user research sooner in the product design process.

Based on this study, PAIR’s researchers built PromptInfuser, a design tool plugin for authoring LLM-infused mock-ups. The plugin introduces two novel LLM interactions: input-output, which makes content interactive and dynamic, and frame-change, which directs users to different frames depending on their natural language input. The result is more tightly integrated UI and ML prototyping, all within a single interface.

Recent advances in AI represent a significant shift in how easy it is for researchers to customize and control models for their research objectives and goals. These capabilities are transforming the way we think about interacting with AI, and they create lots of new opportunities for the research community. PAIR is excited about how we can leverage these capabilities to make AI easier to use for more people.

Acknowledgements

Thanks to everyone in PAIR, to Reena Jana, and to all of our collaborators.