Video and all of its moving parts can be a lot for a developer to handle. An experienced developer's deep understanding of data structures, encoding techniques, and image and signal processing plays a major role in the outcomes of seemingly simple, everyday video processing tasks such as compression or editing.

To work effectively with video content, it's important to understand the properties of, and distinctions between, its primary file formats (e.g., .mp4, .mov, .wmv, .avi) and the codecs used to encode the video inside them (e.g., H.264, H.265, VP8, VP9). The tools needed for effective video processing are rarely packaged neatly as comprehensive libraries, leaving the developer to navigate a vast, intricate ecosystem of open-source tools to deliver engaging computer vision applications.

Computer Vision Applications Explained

Computer vision applications are built on a spectrum of techniques, from simple heuristics to complex neural networks, by which we feed an image or video to a computer as input and produce meaningful output, such as:

- Facial recognition features in smartphone cameras, useful for organizing and searching photo albums and for tagging people in social media apps.

- Road marking detection, as implemented in self-driving cars moving at high speeds.

- Optical character recognition technology that enables visual search apps (like Google Lens) to recognize the shapes of text characters in images.

The preceding examples are as different as can be, each showcasing an entirely distinct function, but they share one simple commonality: Images are their primary input. Each application transforms unstructured, often chaotic, images or frames into intelligible, ordered data that benefits end users.

Size Matters: Common Challenges of Working With Video

An end user who views a video may regard it as a single entity. But a developer must approach it as a collection of individual, sequential frames. For example, before an engineer writes a program to detect real-time traffic patterns in a video of moving vehicles, they must first extract individual frames from that video, and then apply an algorithm that detects the cars on the road.

In its raw state, a video file is huge, making it too large to hold in a computer's memory, unwieldy for the developer to handle, difficult to share, and expensive to store. For example, a single minute of raw, uncompressed 1080p video at 60 frames per second (fps) requires more than 22 gigabytes of storage:

60 seconds * 1080 px (height) * 1920 px (width) * 3 bytes per pixel * 60 fps = 22,394,880,000 bytes ≈ 22.39 GB
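The same arithmetic can be checked in code. This is a small sketch, not part of the tutorial's program; the helper names `raw_video_bytes` and `to_gigabytes` are hypothetical, and the calculation assumes every pixel takes the same number of bytes (3 for 8-bit RGB, as above).

```rust
/// Bytes needed to store uncompressed video, assuming every pixel takes the
/// same number of bytes (3 for 8-bit RGB, as in the calculation above).
fn raw_video_bytes(seconds: u64, width: u64, height: u64, bytes_per_pixel: u64, fps: u64) -> u64 {
    // Total frames times bytes per frame.
    seconds * fps * width * height * bytes_per_pixel
}

/// Converts bytes to decimal gigabytes (1 GB = 10^9 bytes).
fn to_gigabytes(bytes: u64) -> f64 {
    bytes as f64 / 1e9
}
```

Here `raw_video_bytes(60, 1920, 1080, 3, 60)` returns 22,394,880,000, which `to_gigabytes` reports as about 22.39 GB (roughly 20.86 GiB in binary units). A single 1280 × 720 RGB frame, the size used later in this tutorial, works out to 2,764,800 bytes.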

Video is, therefore, compressed as a matter of course before it's processed. But there is no guarantee that an individual compressed video frame will display a complete image. This is because the parameters applied at compression time define how much quality and detail a video's individual frames retain; most codecs, including H.264, store only occasional frames (keyframes) as complete images and encode the rest as differences from neighboring frames. While the compressed video as a whole may play well enough to provide a great viewing experience, that's not the same as each of its individual frames being interpretable as a complete image.

In this tutorial, we will use popular open-source computer vision tools to solve some basic challenges of video processing. This experience will position you to customize a computer vision pipeline for your exact use cases. (To keep things simple, we will not cover the audio components of video in this article.)

A Simple Computer Vision App Tutorial: Calculating Brightness

To deliver a computer vision application, an engineering team develops and implements an efficient and powerful computer vision pipeline whose architecture includes, at a minimum:

| Step | Description |
|---|---|
| Step 1: Image acquisition | Images or videos can be acquired from a wide range of sources, including cameras or sensors, digital videos stored on disk, or videos streamed over the internet. |
| Step 2: Image preprocessing | The developer chooses preprocessing operations, such as denoising, resizing, or conversion into a more accessible format. These are intended to make the images easier to work with or analyze. |
| Step 3: Feature extraction | In the representation or extraction step, information in the preprocessed images or frames is captured. This information may include edges, corners, or shapes, for instance. |
| Step 4: Interpretation, analysis, or output | In the final step, we accomplish the task at hand. |
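To make the four steps above concrete, here is a minimal Rust sketch (not code from this tutorial or from Hypetrigger) that wires them together as plain functions. The `Frame` alias and `run_pipeline` function are hypothetical names; acquisition is modeled as any iterator of frames, and each later stage is a closure supplied by the caller.

```rust
/// Hypothetical frame type for this sketch: packed RGB bytes.
type Frame = Vec<u8>;

/// Runs each acquired frame through preprocessing, feature extraction, and
/// interpretation, collecting one output per frame.
fn run_pipeline<A, P, E, I, T, R>(acquire: A, preprocess: P, extract: E, interpret: I) -> Vec<R>
where
    A: Iterator<Item = Frame>, // Step 1: image acquisition
    P: Fn(Frame) -> Frame,     // Step 2: image preprocessing
    E: Fn(&Frame) -> T,        // Step 3: feature extraction
    I: Fn(T) -> R,             // Step 4: interpretation, analysis, or output
{
    acquire
        .map(|frame| {
            let prepared = preprocess(frame);
            interpret(extract(&prepared))
        })
        .collect()
}
```

Keeping each stage a separate closure means one stage (say, the feature extractor) can be swapped without touching the others.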

Let's imagine you were hired to build an application that calculates the brightness of a video's individual frames. We'll align the project's pipeline architecture with the simple computer vision model shared above.

The program we will produce in this tutorial has been included as an example within Hypetrigger, an open-source Rust library I developed. Hypetrigger includes everything you'd need to run a computer vision pipeline on streaming video from the internet: TensorFlow bindings for image recognition, Tesseract for optical character recognition, and support for GPU-accelerated video decoding for a 10x speed boost. To install it, run the command cargo add hypetrigger in your Rust project, or clone the Hypetrigger repo to explore its examples.

To maximize the learning and experience to be gained, we will build a computer vision pipeline from scratch in this tutorial rather than using the more user-friendly Hypetrigger.

Our Tech Stack

For our project, we will use:

| Tool | Description |
|---|---|
| FFmpeg | Often called the Swiss Army knife of video and touted as one of the best tools available for working with video, FFmpeg is an open-source library written in C and used for encoding, decoding, conversion, and streaming. It's used in widely deployed software like Google Chrome, VLC Media Player, and Open Broadcaster Software (OBS), among others. FFmpeg is available for download as an executable command-line tool or a source code library, and can be used with any language that can spawn child processes. |
| Rust | A major strength of Rust is its ability to detect memory errors (e.g., null pointers, segfaults, dangling references) at compile time. Rust offers high performance with guaranteed memory safety, making it a good choice for video processing. |

Step 1: Image Acquisition

In this scenario, a previously acquired animated sample video is ready to be processed.

Step 2: Image Preprocessing

For this project, image preprocessing consists of converting the video from its H.264-encoded format to raw RGB, a format that's much easier to work with.
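In raw RGB (FFmpeg's rgb24 pixel format), each pixel is three bytes (red, green, blue), stored row by row. The hypothetical helper below shows how a pixel is located in such a buffer. It assumes tightly packed rows with no padding, the same assumption the program later makes when it sizes each frame buffer as width × height × 3 bytes.

```rust
/// Returns the (red, green, blue) bytes of the pixel at column `x`, row `y`
/// in a tightly packed rgb24 frame that is `width` pixels wide.
fn pixel_at(frame: &[u8], width: usize, x: usize, y: usize) -> (u8, u8, u8) {
    // Skip `y` full rows, then `x` pixels; each pixel occupies 3 bytes.
    let offset = (y * width + x) * 3;
    (frame[offset], frame[offset + 1], frame[offset + 2])
}
```

For a 2 × 2 frame, the bottom-right pixel (x = 1, y = 1) starts at byte offset 9.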

Let's decompress our video using FFmpeg's portable, executable command-line tool from within a Rust program. The Rust program will open our sample video and convert it to RGB. To do so, we'll pass the following arguments to the ffmpeg command:

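| Argument* | Description | Use Case |
|---|---|---|
| -i | Indicates the file name or URL of the source video. | Points FFmpeg at our sample video. |
| -f | Sets the output format. | The rawvideo format outputs raw, unencoded frames. |
| -pix_fmt | Sets the pixel format. | rgb24 gives 3 bytes per pixel (red, green, blue). |
| -r | Sets the output frame rate. | 1 requests one frame per second of video. |
| pipe:1 | Tells FFmpeg where to send output; it's a required final argument. | Sends output to stdout, where our Rust program reads it. |

*For a list of common arguments, enter ffmpeg -help; for the complete listing, enter ffmpeg -h full.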

Combined on the command line, these arguments give us ffmpeg -i input_video.mp4 -f rawvideo -pix_fmt rgb24 -r 1 pipe:1, which serves as our starting point for processing the video's frames:

```rust
use std::{
    io::{BufReader, Read},
    process::{Command, Stdio},
};

fn main() {
    // Test video provided by https://gist.github.com/jsturgis/3b19447b304616f18657.
    let test_video =
        "http://commondatastorage.googleapis.com/gtv-videos-bucket/sample/BigBuckBunny.mp4";

    // Video is in RGB format; 3 bytes per pixel (1 red, 1 green, 1 blue).
    let bytes_per_pixel = 3;
    let video_width = 1280;
    let video_height = 720;

    // Create an FFmpeg command with the specified arguments.
    let mut ffmpeg = Command::new("ffmpeg")
        .arg("-i")
        .arg(test_video) // Specify the input video
        .arg("-f") // Specify the output format (raw RGB pixels)
        .arg("rawvideo")
        .arg("-pix_fmt")
        .arg("rgb24") // Specify the pixel format (RGB, 8 bits per channel)
        .arg("-r")
        .arg("1") // Request a rate of 1 frame per second
        .arg("pipe:1") // Send output to the stdout pipe
        .stderr(Stdio::null())
        .stdout(Stdio::piped())
        .spawn() // Spawn the command process
        .unwrap(); // Unwrap the result (i.e., panic and exit if there was an error)
}
```
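The program hardcodes the video's dimensions (1280 × 720). If those numbers don't match the actual stream, every frame read from FFmpeg will be misaligned. One option, sketched here as an assumption rather than part of the tutorial, is to ask ffprobe (the inspection tool distributed alongside FFmpeg) for the dimensions first. The function names are hypothetical.

```rust
use std::process::Command;

/// Parses ffprobe output of the form "1280x720" into (width, height).
fn parse_dimensions(text: &str) -> Option<(usize, usize)> {
    let (w, h) = text.trim().split_once('x')?;
    Some((w.parse().ok()?, h.parse().ok()?))
}

/// Asks ffprobe for the width and height of the first video stream.
/// Returns None if ffprobe can't be run or prints something unexpected.
fn probe_dimensions(input: &str) -> Option<(usize, usize)> {
    let output = Command::new("ffprobe")
        .args([
            "-v", "error", // Only print errors, not the banner
            "-select_streams", "v:0", // First video stream
            "-show_entries", "stream=width,height",
            "-of", "csv=s=x:p=0", // Print as "WIDTHxHEIGHT"
            input,
        ])
        .output()
        .ok()?;
    parse_dimensions(&String::from_utf8_lossy(&output.stdout))
}
```

A caller could fall back to known dimensions when `probe_dimensions` returns None.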

Our program will receive one video frame at a time, each decoded into raw RGB. To avoid accumulating huge volumes of data, let's allocate a single frame-sized buffer and reuse it for every frame, so memory use stays constant regardless of the video's length. Let's also add a loop that fills the buffer with data from FFmpeg's standard output channel:

```rust
fn main() {
    // …

    // Read the video output into a buffer.
    let stdout = ffmpeg.stdout.take().unwrap();
    let buf_size = video_width * video_height * bytes_per_pixel;
    let mut reader = BufReader::new(stdout);
    let mut buffer = vec![0u8; buf_size];
    let mut frame_num = 0;

    // read_exact fills the buffer with exactly one frame; it returns an error,
    // ending the loop, once FFmpeg's output is exhausted.
    while let Ok(()) = reader.read_exact(buffer.as_mut_slice()) {
        // Retrieve each video frame as a vector of raw RGB pixels.
        let raw_rgb = buffer.clone();
    }
}
```
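The `while let Ok(())` loop stops on any error, so a genuine read failure looks the same as the normal end of the stream. A hypothetical variant, generic over any `Read` source so it can be tried without FFmpeg, treats `UnexpectedEof` (what `read_exact` returns when input runs out) as the end of the video and surfaces every other error:

```rust
use std::io::{ErrorKind, Read};

/// Reads fixed-size frames from any byte source (e.g., FFmpeg's stdout), calling
/// `on_frame` with each frame's index and bytes. Returns the number of frames read.
/// A trailing partial frame is discarded.
fn for_each_frame<R: Read>(
    mut reader: R,
    frame_size: usize,
    mut on_frame: impl FnMut(usize, &[u8]),
) -> std::io::Result<usize> {
    // One reusable buffer for every frame.
    let mut buffer = vec![0u8; frame_size];
    let mut frame_num = 0;
    loop {
        match reader.read_exact(&mut buffer) {
            Ok(()) => {
                on_frame(frame_num, &buffer);
                frame_num += 1;
            }
            // Input ran out: a normal end of stream.
            Err(e) if e.kind() == ErrorKind::UnexpectedEof => return Ok(frame_num),
            // Anything else is a real failure worth reporting.
            Err(e) => return Err(e),
        }
    }
}
```

Passing a borrowed slice to the callback also avoids cloning the buffer for every frame.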

Notice that the while loop declares raw_rgb, a variable that holds one full RGB image (a copy of the current frame).

Step 3: Feature Extraction

To calculate the average brightness of each frame preprocessed in Step 2, let's add the following function to our program (either before or after the main function):

```rust
/// Calculate the average brightness of an image,
/// returned as a float between 0 and 1.
fn average_brightness(raw_rgb: Vec<u8>) -> f64 {
    let mut sum = 0.0;
    // Walk the buffer 3 bytes (one pixel: red, green, blue) at a time.
    for pixel in raw_rgb.chunks_exact(3) {
        let r = pixel[0] as f64;
        let g = pixel[1] as f64;
        let b = pixel[2] as f64;
        // A pixel's brightness is the mean of its channels, scaled to 0..=1.
        let pixel_brightness = (r / 255.0 + g / 255.0 + b / 255.0) / 3.0;
        sum += pixel_brightness;
    }
    // Divide by the number of pixels (3 bytes each).
    sum / (raw_rgb.len() / 3) as f64
}
```
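The function above weights red, green, and blue equally. A common alternative, swapped in here as an option rather than what this tutorial uses, is luma with the ITU-R BT.601 weights, which count green most heavily because the eye is most sensitive to it. The name `average_luma` is hypothetical.

```rust
/// Average perceived brightness (luma) of a packed RGB image, from 0.0 to 1.0,
/// using the ITU-R BT.601 weights for red, green, and blue.
fn average_luma(raw_rgb: &[u8]) -> f64 {
    let pixel_count = raw_rgb.len() / 3;
    if pixel_count == 0 {
        return 0.0; // Avoid dividing by zero on an empty buffer.
    }
    let sum: f64 = raw_rgb
        .chunks_exact(3)
        .map(|p| 0.299 * p[0] as f64 + 0.587 * p[1] as f64 + 0.114 * p[2] as f64)
        .sum();
    // Each pixel's luma is at most 255, so scale the mean into 0.0..=1.0.
    sum / (pixel_count as f64 * 255.0)
}
```

A pure green pixel scores 0.587 here but 1/3 with `average_brightness`, which is the kind of difference the weighting is meant to capture.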

Then, at the end of the while loop, we can calculate and print each frame's brightness to the console:

```rust
fn main() {
    // …

    while let Ok(()) = reader.read_exact(buffer.as_mut_slice()) {
        // Retrieve each video frame as a vector of raw RGB pixels.
        let raw_rgb = buffer.clone();

        // Calculate the average brightness of the frame.
        let brightness = average_brightness(raw_rgb);
        println!("frame {frame_num} has brightness {brightness}");
        frame_num += 1;
    }
}
```

At this point, the code will match this example file.

Now we run the program on our sample video, producing the following output:

```
frame 0 has brightness 0.055048076377046
frame 1 has brightness 0.467577447011064
frame 2 has brightness 0.878193112575386
frame 3 has brightness 0.859071674156269
frame 4 has brightness 0.820603467400872
frame 5 has brightness 0.766673757205845
frame 6 has brightness 0.717223347005918
frame 7 has brightness 0.674823835783496
frame 8 has brightness 0.656084418402863
frame 9 has brightness 0.656437488652946
[500+ more frames omitted]
```
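Because the FFmpeg command requests one frame per second (-r 1), a frame's index roughly equals its position in the video in seconds. A small hypothetical helper converts indices to m:ss timestamps, which makes it easier to match frames to moments in the video. Treat the result as approximate, since FFmpeg may drop or duplicate frames to produce the requested rate.

```rust
/// Converts a frame index to an approximate "m:ss" timestamp, given the frame
/// rate requested with FFmpeg's `-r` option (must be at least 1).
fn frame_timestamp(frame_num: u64, frames_per_second: u64) -> String {
    let total_seconds = frame_num / frames_per_second;
    // Minutes, then seconds padded to two digits.
    format!("{}:{:02}", total_seconds / 60, total_seconds % 60)
}
```

With one frame per second, frame 580 maps to 9:40.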

Step 4: Interpretation

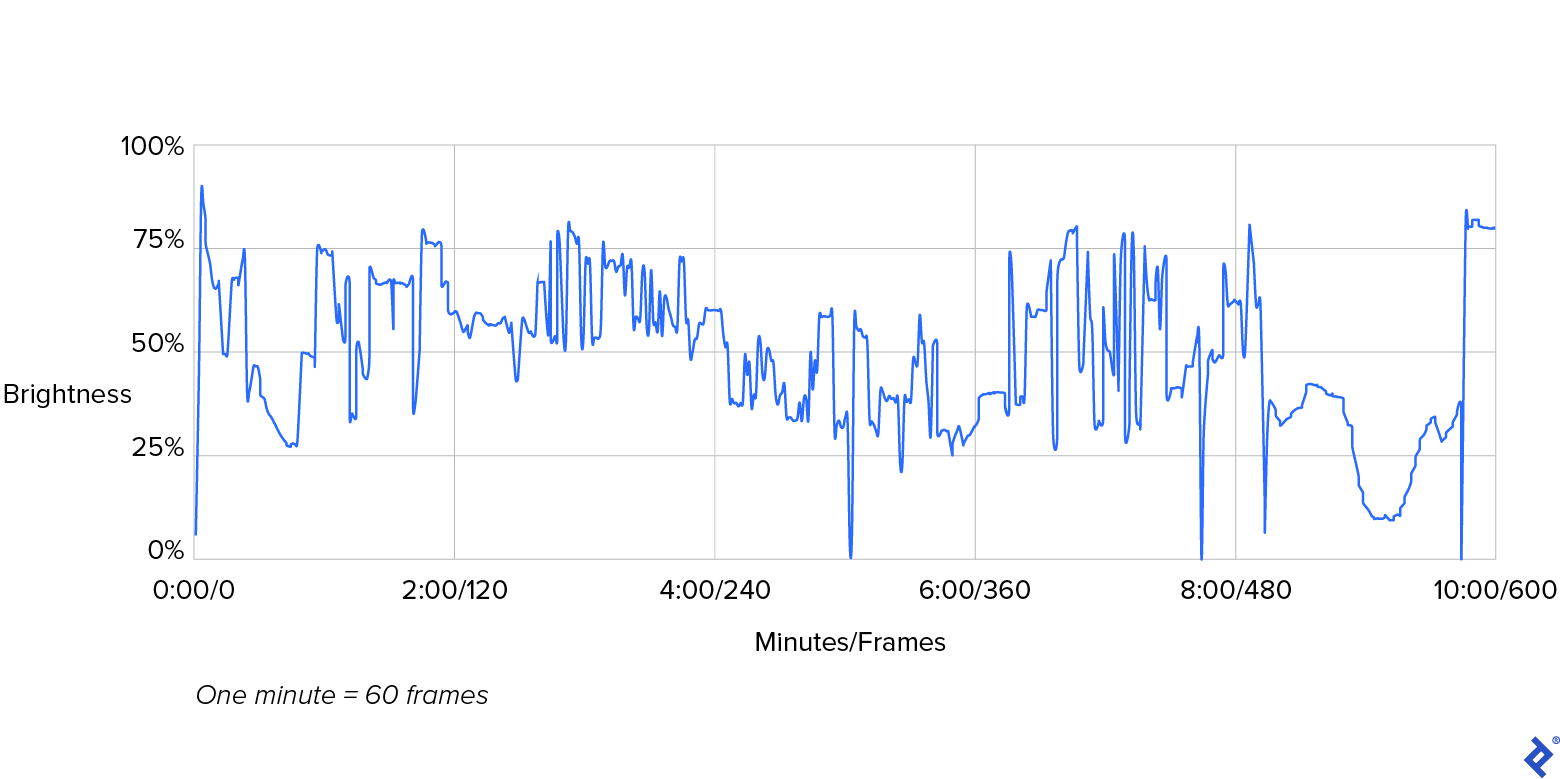

Right here’s a graphical illustration of those numbers:

Within the previous graph, observe the plotted line that represents our video’s brightness. Its sharp peaks and valleys characterize the dramatic transitions in brightness that happen between consecutive frames. The brightness of body 0, depicted on the graph’s far left, measures at 5% (i.e., fairly darkish) and peaks sharply at 87% (i.e., remarkably vivid), simply two frames later. Equally outstanding transitions happen round 5:00, 8:00, and 9:40 minutes into the video. On this case, such intense variations in brightness characterize regular film scene transitions, as seen within the video.
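Spotting those transitions can also be automated. One simple approach, offered here as a sketch rather than a technique from the tutorial, flags any frame whose brightness differs from the previous frame's by more than a threshold. The function name and the 0.3 threshold in the example are arbitrary assumptions.

```rust
/// Returns the indices of frames whose brightness differs from the previous
/// frame's by more than `threshold` (brightness on a 0.0 to 1.0 scale).
fn sharp_transitions(brightness: &[f64], threshold: f64) -> Vec<usize> {
    brightness
        .windows(2) // Each window is (previous frame, current frame).
        .enumerate()
        .filter(|(_, pair)| (pair[1] - pair[0]).abs() > threshold)
        .map(|(i, _)| i + 1) // Window i ends at frame i + 1.
        .collect()
}
```

Applied to the ten values printed earlier with a threshold of 0.3, this flags frames 1 and 2, the sharp rise in brightness at the start of the video.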

Real-world Use Cases for Calculating Brightness

In the real world, we would likely go on to analyze the detected brightness levels and, conditionally, trigger an action. In postproduction, the filmmaker, videographer, or video editor would analyze this data and retain all frames whose brightness values fall within the project's agreed-upon range. Alternatively, a professional may pull and review frames whose brightness values are questionable, and may ultimately approve, re-render, or exclude individual frames from the video's final output.
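The keep-or-review workflow described above could start from a simple partition. In this hypothetical sketch, `min` and `max` stand in for the project's agreed-upon brightness range, which the tutorial doesn't specify.

```rust
/// Splits frame indices into (within range, needs review), where the range is
/// the project's agreed-upon brightness bounds, inclusive.
fn triage_frames(brightness: &[f64], min: f64, max: f64) -> (Vec<usize>, Vec<usize>) {
    (0..brightness.len()).partition(|&i| (min..=max).contains(&brightness[i]))
}
```

The first list could be retained automatically, while the second goes to an editor to approve, re-render, or exclude.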

Another interesting use case for analyzing frame brightness involves security camera footage from an office building. By comparing the frames' brightness levels to the building's in/out logs, we can determine whether the last person to leave actually turns off the lights as they're supposed to. If our analysis indicates that lights are being left on after everyone has gone for the day, we could send reminders encouraging people to turn off the lights when they leave in order to conserve energy.
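A rough version of that check might look like the following. Everything here is a hypothetical assumption: the sample format (seconds since midnight paired with frame brightness), the grace period, and the brightness threshold that counts as "lights on."

```rust
/// Hypothetical check: were the lights likely left on after the last person left?
/// `samples` holds (seconds since midnight, average frame brightness) pairs from
/// the security camera; `last_exit_secs` comes from the building's in/out log.
fn lights_left_on(
    samples: &[(u32, f64)],
    last_exit_secs: u32,
    grace_secs: u32,
    lights_on_threshold: f64,
) -> bool {
    // Keep only frames captured after the last exit plus a grace period.
    let after: Vec<f64> = samples
        .iter()
        .filter(|(t, _)| *t > last_exit_secs + grace_secs)
        .map(|&(_, brightness)| brightness)
        .collect();
    if after.is_empty() {
        return false; // No footage after everyone left, so nothing to judge.
    }
    let mean = after.iter().sum::<f64>() / after.len() as f64;
    mean > lights_on_threshold
}
```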

This tutorial covers some basic computer vision processing and lays the foundation for more advanced techniques, such as graphing multiple features of the input video and correlating them using more advanced statistical measures. Such analysis marks a crossing from the world of video into the realm of statistical inference and machine learning, the essence of computer vision.
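As one example of such a statistical measure, the Pearson correlation coefficient quantifies how closely two per-frame feature series (say, brightness and some other per-frame measurement) move together. This is the standard formula, not something specific to this tutorial; the function name and the choice of Pearson's r are assumptions.

```rust
/// Pearson correlation coefficient between two equal-length feature series.
/// Returns None if the lengths differ, there are fewer than two samples,
/// or either series is constant (zero variance).
fn pearson(xs: &[f64], ys: &[f64]) -> Option<f64> {
    if xs.len() != ys.len() || xs.len() < 2 {
        return None;
    }
    let n = xs.len() as f64;
    let mean_x = xs.iter().sum::<f64>() / n;
    let mean_y = ys.iter().sum::<f64>() / n;
    // Accumulate covariance and variances from deviations about the means.
    let (mut cov, mut var_x, mut var_y) = (0.0, 0.0, 0.0);
    for (x, y) in xs.iter().zip(ys) {
        let (dx, dy) = (x - mean_x, y - mean_y);
        cov += dx * dy;
        var_x += dx * dx;
        var_y += dy * dy;
    }
    if var_x == 0.0 || var_y == 0.0 {
        return None;
    }
    Some(cov / (var_x.sqrt() * var_y.sqrt()))
}
```

Values near 1 or -1 indicate features that rise and fall together (or in opposition); values near 0 suggest no linear relationship.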

By following the steps laid out in this tutorial and leveraging the tools presented, you can lower the barriers (large file sizes or complicated video codecs) that we commonly associate with decompressing video and decoding RGB pixels. And once you've simplified working with video and computer vision, you can better focus on what matters: delivering intelligent and robust video capabilities in your applications.

The editorial team of the Toptal Engineering Blog extends its gratitude to Martin Goldberg for reviewing the code samples and other technical content presented in this article.